Most developers give AI a prompt and hope for the best. I learned this the hard way when my AI-generated code worked perfectly but violated every architectural standard we had established. The functionality was correct, but the governance was nonexistent.

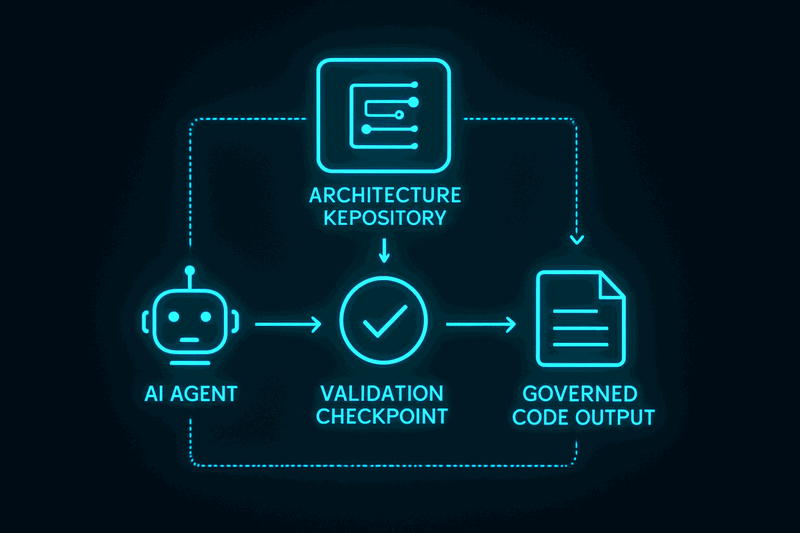

This article explores how enterprise architects can use TOGAF's Architecture Repository structure as a governance framework for AI-assisted development. Rather than hoping AI outputs meet your standards, you can provide architectural context that ensures compliance from the start.

If you are an enterprise architect, solution architect, or technical lead working with AI systems, this framework will help you bridge the gap between AI capability and enterprise governance. You will learn how to structure your architectural knowledge so AI can consume and respect your constraints.

The Governance Gap in AI Development

AI development creates a unique governance challenge. Traditional software development has established review processes, coding standards, and architectural oversight. AI-assisted development often bypasses these controls in favor of speed and experimentation.

The result is what I call "vibe coding" — development that produces functionally correct code without architectural discipline. The AI generates working solutions, but they accumulate technical debt, violate design principles, and create maintenance nightmares.

In my experience building document processing systems, I watched teams generate thousands of lines of AI-assisted code that worked in isolation but failed to integrate with existing enterprise patterns. Error handling was inconsistent. Logging standards were ignored. Dependency boundaries were violated.

The problem was not the AI's capability; it was the absence of architectural context. We were giving the AI features to implement without giving it constraints to follow. Hope is not a governance strategy.

Architecture Repository as AI Context

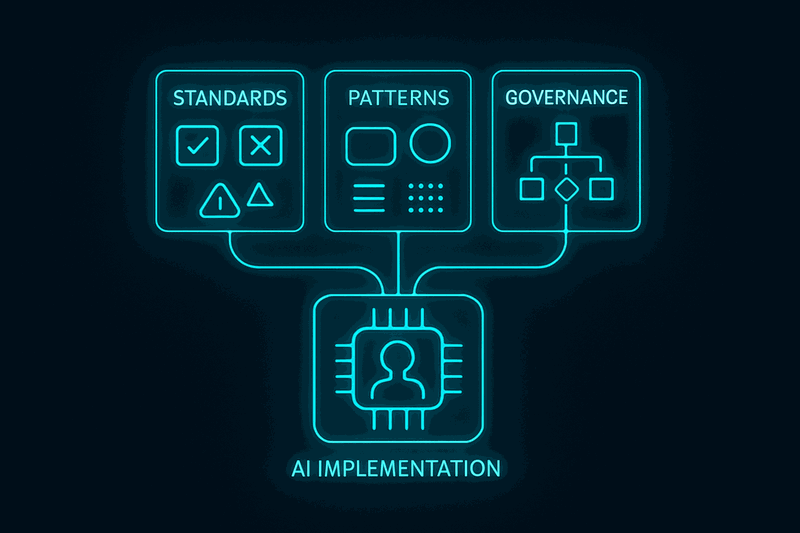

The solution emerged from recognizing that TOGAF's Architecture Repository already provides the structure needed to govern AI development. The repository has several components; three of them map directly to AI governance needs:

The Standards Information Base contains the mandatory constraints (MUST requirements) that AI outputs cannot violate. These become the constitutional rules for AI implementation.

The Reference Library contains the recommended patterns (SHOULD guidance) that AI can adapt based on context. These provide flexibility within architectural boundaries.

The Governance Log contains the architectural decision records (ADRs) that explain why certain patterns exist. This provides context that helps AI make appropriate trade-offs.

When I restructured our architectural documentation into this repository format, something remarkable happened. AI outputs became architecturally consistent. The repository became the governance layer that bridged AI capability with enterprise standards.

The key insight was treating the Architecture Repository not as documentation but as a knowledge base that AI could consume and respect.

Standards Information Base for AI Governance

The Standards Information Base transforms architectural requirements into AI constraints. Rather than hoping AI follows your patterns, you explicitly define what compliance means using normative language.

Consider this example from our document processing system:

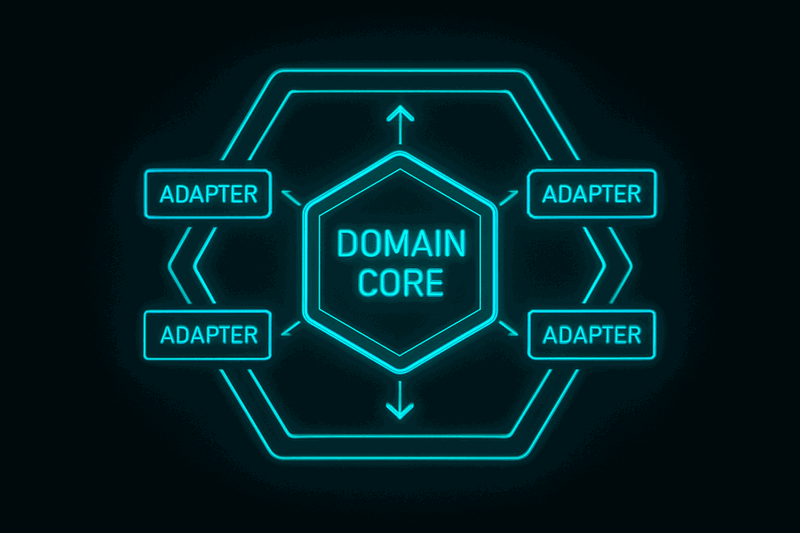

Application Design Standard (STD-DES-001)

- The service MUST implement hexagonal architecture with clear domain boundaries

- External dependencies MUST be injected through ports and adapters

- The service SHOULD use structured logging with correlation identifiers

- Error responses MAY include debug information in development environments

This standard uses the RFC 2119 normative keywords (MUST, SHOULD, MAY). In my experience, AI responds to the distinction: MUST requirements produce different outputs than SHOULD recommendations. That specificity creates constraints that prevent architectural drift.

When I included this standard in AI prompts, the generated code followed hexagonal boundaries, implemented proper dependency injection, and included structured logging. It was the first time an AI output required zero architectural corrections.

The Standards Information Base works because it provides explicit constraints rather than implicit expectations. AI needs to know what architectural compliance looks like before it can deliver compliant code.
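One way to make a standard like STD-DES-001 consumable by both prompts and tooling is to hold it as data rather than free text. The following is a minimal sketch, not the system described above: the class names (`Level`, `Requirement`, `Standard`) and the `render` format are assumptions made for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum


class Level(Enum):
    """RFC 2119 keywords used by the standard."""
    MUST = "MUST"
    SHOULD = "SHOULD"
    MAY = "MAY"


@dataclass(frozen=True)
class Requirement:
    level: Level
    text: str


@dataclass(frozen=True)
class Standard:
    std_id: str
    title: str
    requirements: tuple[Requirement, ...]

    def render(self) -> str:
        """Render the standard as plain text that can be pasted into a prompt."""
        lines = [f"{self.title} ({self.std_id})"]
        for req in self.requirements:
            lines.append(f"- [{req.level.value}] {req.text}")
        return "\n".join(lines)

    def mandatory(self) -> list[Requirement]:
        """Return only the MUST requirements, the ones validation should enforce."""
        return [r for r in self.requirements if r.level is Level.MUST]


# The example standard from this article, expressed as data.
STD_DES_001 = Standard(
    std_id="STD-DES-001",
    title="Application Design Standard",
    requirements=(
        Requirement(Level.MUST, "Implement hexagonal architecture with clear domain boundaries"),
        Requirement(Level.MUST, "Inject external dependencies through ports and adapters"),
        Requirement(Level.SHOULD, "Use structured logging with correlation identifiers"),
        Requirement(Level.MAY, "Include debug information in error responses in development environments"),
    ),
)

if __name__ == "__main__":
    print(STD_DES_001.render())
```

Keeping the keyword as a separate field means the same record can feed the prompt (via `render`) and tell a validator which requirements are non-negotiable (via `mandatory`).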

Reference Library as Pattern Guidance

The Reference Library provides AI with proven patterns that can be adapted to specific contexts. Unlike standards, which are mandatory, reference patterns offer flexibility within architectural boundaries.

Think of standards as recipes that must be followed exactly, while the reference library is like a pantry of ingredients and techniques that can be combined based on the situation. AI needs both: mandatory patterns for consistency and optional patterns for flexibility.

Our reference library included:

Error Handling Patterns — Classification schemes for different error types, retry strategies, and fallback mechanisms

Integration Patterns — Event-driven architectures, API design patterns, and data transformation approaches

Testing Patterns — Unit testing strategies, integration test frameworks, and validation approaches

When AI encountered a situation covered by reference patterns, it could adapt the approach rather than inventing something new. This reduced inconsistency while preserving flexibility.
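To make the idea of a reference pattern concrete, here is a rough sketch of what an error handling entry might look like: classify the error, retry transient failures with backoff, and fall back when retries run out. The classification rule, the function names, and the defaults are assumptions for illustration, not the patterns from our library.

```python
import time
from enum import Enum
from typing import Callable, TypeVar

T = TypeVar("T")


class ErrorClass(Enum):
    TRANSIENT = "transient"   # worth retrying
    PERMANENT = "permanent"   # retrying will not help


def classify(exc: Exception) -> ErrorClass:
    """Assumed classification scheme: network-style errors are transient."""
    if isinstance(exc, (TimeoutError, ConnectionError)):
        return ErrorClass.TRANSIENT
    return ErrorClass.PERMANENT


def call_with_retry(
    operation: Callable[[], T],
    fallback: Callable[[], T],
    attempts: int = 3,
    base_delay: float = 0.5,
) -> T:
    """Retry transient failures with exponential backoff, then use the fallback.

    Permanent errors are re-raised immediately so callers can handle them.
    """
    for attempt in range(attempts):
        try:
            return operation()
        except Exception as exc:
            if classify(exc) is ErrorClass.PERMANENT:
                raise
            if attempt < attempts - 1:
                time.sleep(base_delay * (2 ** attempt))
    return fallback()
```

Because this is SHOULD-level guidance, the AI is free to change the retry count or the classification rule for a given service, as long as the overall shape stays recognizable.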

The Constitution vs Laws analogy applies here. Standards are constitutional constraints that cannot be violated. Reference patterns are laws that should be followed but can be adapted. Both provide governance without requiring human review of every decision.

Governance-as-Code Implementation

The Architecture Repository enables what I call Governance-as-Code — implementing architectural oversight through automated validation rather than manual review processes. Standards become validation rules. Compliance becomes testable.

This approach scales governance without creating bureaucracy. Instead of reviewing every AI output manually, you define validation rules that catch violations before merge. The validation results can even provide feedback for AI retry attempts.

In our document processing system, we implemented validation that checked:

- Dependency boundary violations (hexagonal architecture compliance)

- Logging format consistency (structured logging standard)

- Error handling patterns (classification and retry logic)

- API contract adherence (interface design standards)

When AI generated code that violated these constraints, the validation framework provided specific feedback about what needed correction. This created a feedback loop that improved AI outputs over time.
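The first check in that list, dependency boundaries, is the easiest to sketch. The example below uses Python's standard `ast` module to flag imports of adapter code from a domain module. The forbidden package names and the message format are assumptions; a real project would configure them to match its own layout.

```python
import ast

# Hypothetical package names that domain code must not depend on.
FORBIDDEN_IN_DOMAIN = ("adapters", "infrastructure")


def find_boundary_violations(source: str, module_name: str) -> list[str]:
    """Return one message per import in `source` that crosses the boundary."""
    violations = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            imported = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom):
            imported = [node.module or ""]
        else:
            continue
        for name in imported:
            # Flag the import if any segment of the dotted path is forbidden.
            if any(part in FORBIDDEN_IN_DOMAIN for part in name.split(".")):
                violations.append(
                    f"{module_name}:{node.lineno} imports '{name}' "
                    "(STD-DES-001: domain code MUST NOT depend on adapters)"
                )
    return violations


if __name__ == "__main__":
    generated = "import json\nfrom adapters.s3 import S3Client\n"
    for message in find_boundary_violations(generated, "domain/service.py"):
        print(message)
```

Each message names the file, the line, and the standard that was violated, which is the kind of specific feedback that can be handed back to the AI for a retry.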

The Governance Log (ADRs) provided crucial context for these validations. Rather than just flagging violations, the system could explain why certain patterns were required, helping both AI and developers understand the architectural reasoning.
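A simple way to attach that reasoning is a lookup from standard ID to the ADR that motivated it. This is only a sketch: the ADR identifier, title, and rationale below are placeholders invented for the example, and `explain` is a hypothetical helper.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Adr:
    adr_id: str
    title: str
    rationale: str


# Placeholder entry; a real Governance Log would be loaded from the repository.
ADRS_BY_STANDARD = {
    "STD-DES-001": Adr(
        adr_id="ADR-0007",
        title="Adopt hexagonal architecture",
        rationale="Domain logic must be testable without external systems.",
    ),
}


def explain(standard_id: str, violation: str) -> str:
    """Append the linked ADR's rationale to a violation message, if one exists."""
    adr = ADRS_BY_STANDARD.get(standard_id)
    if adr is None:
        return f"{violation} (violates {standard_id}; no ADR linked)"
    return (
        f"{violation} (violates {standard_id}). "
        f"Why: {adr.rationale} See {adr.adr_id}: {adr.title}."
    )


if __name__ == "__main__":
    print(explain("STD-DES-001", "domain/service.py imports adapters.s3"))
```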

Practical Implementation Strategy

Setting up an Architecture Repository for AI governance requires careful prioritization. Start with standards before patterns, and focus on the constraints that matter most for your context.

Phase 1: Core Standards

Begin with two or three critical standards that address your biggest AI governance gaps. In our case, these were application design (hexagonal architecture) and logging (structured format). Write them with explicit normative language and include compliance checklists.

Phase 2: Reference Patterns

Add proven patterns that AI can adapt. Focus on areas where you want consistency but not rigidity. Error handling, integration approaches, and testing strategies work well here.

Phase 3: Governance Integration

Link ADRs to standards and patterns to provide decision context. This helps AI understand not just what to implement, but why certain approaches were chosen.

Phase 4: Validation Automation

Implement automated checking of standards compliance. Start simple — dependency analysis, format validation, pattern recognition. Build complexity gradually.
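Format validation is a good candidate for a first automated check. The sketch below assumes structured logs are emitted as one JSON object per line and that four fields are required; both the encoding and the field names are assumptions for illustration, since the logging standard itself is not reproduced in this article.

```python
import json

# Assumed required fields for a structured log record.
REQUIRED_FIELDS = ("timestamp", "level", "message", "correlation_id")


def validate_log_line(line: str) -> list[str]:
    """Return a list of problems with one log line; an empty list means compliant."""
    try:
        record = json.loads(line)
    except json.JSONDecodeError:
        return ["not valid JSON"]
    if not isinstance(record, dict):
        return ["not a JSON object"]
    return [f"missing field '{name}'" for name in REQUIRED_FIELDS if name not in record]


if __name__ == "__main__":
    sample = '{"timestamp": "2024-01-01T00:00:00Z", "level": "INFO", "message": "parsed"}'
    print(validate_log_line(sample))
```

A check like this can run against log output captured during tests, so it verifies what the generated code actually emits rather than how it is written.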

The key is treating this as an architectural discipline, not a documentation exercise. The repository exists to govern AI implementation, not to impress with theoretical completeness.

Integration with AI Development Workflow

The Architecture Repository integrates into AI development through prompt structure and validation feedback. Instead of ad-hoc prompts, you provide systematic context that includes relevant standards, patterns, and decision history.

Prompt Structure:

1. Repository Context (relevant standards and patterns)

2. Specific Request (what you want AI to implement)

3. Validation Criteria (how compliance will be checked)

This approach transforms AI from an ungoverned code generator into a constrained implementer that respects architectural boundaries. The repository provides the constraints that make AI outputs governable.
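The three-part prompt structure can be assembled mechanically. Here is a minimal sketch; `build_prompt` and its parameters are hypothetical names, and the section labels simply mirror the list above.

```python
def build_prompt(
    standards: list[str],
    patterns: list[str],
    request: str,
    criteria: list[str],
) -> str:
    """Assemble a prompt from repository context, the request, and validation criteria."""
    sections = [
        "1. Repository Context",
        "Standards (mandatory):",
        *standards,
        "Reference patterns (adaptable):",
        *patterns,
        "",
        "2. Specific Request",
        request,
        "",
        "3. Validation Criteria",
        *[f"- {item}" for item in criteria],
    ]
    return "\n".join(sections)


if __name__ == "__main__":
    print(
        build_prompt(
            standards=["STD-DES-001: external dependencies MUST be injected through ports and adapters"],
            patterns=["Error handling: retry transient failures, then fall back"],
            request="Implement the document parsing service.",
            criteria=["No adapter imports in domain modules"],
        )
    )
```

Listing the validation criteria in the prompt tells the AI up front how its output will be judged, using the same wording the automated checks report.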

The validation feedback creates a continuous improvement loop. When AI generates code that violates standards, the specific violations become input for retry attempts. Over time, this improves both the AI outputs and the clarity of your architectural constraints.
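The retry loop described above can be sketched in a few lines. The `generate` and `validate` callables are stand-ins: `generate` represents whatever AI client you use and `validate` represents your compliance checks. Neither name refers to a real library.

```python
from typing import Callable


def generate_until_compliant(
    generate: Callable[[str], str],
    validate: Callable[[str], list[str]],
    prompt: str,
    max_attempts: int = 3,
) -> tuple[str, int]:
    """Regenerate code until validation passes, feeding violations back each time.

    Returns the compliant code and the number of attempts it took.
    """
    violations: list[str] = []
    for attempt in range(1, max_attempts + 1):
        full_prompt = prompt
        if violations:
            feedback = "\n".join(f"- {v}" for v in violations)
            full_prompt = f"{prompt}\n\nFix these violations:\n{feedback}"
        code = generate(full_prompt)
        violations = validate(code)
        if not violations:
            return code, attempt
    raise RuntimeError(
        f"Still non-compliant after {max_attempts} attempts: {violations}"
    )
```

Capping the attempts matters: if the AI cannot satisfy a standard after a few tries, that usually points to an ambiguous or contradictory constraint that a human should look at.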

Conclusion

Architecture discipline does not disappear with AI — it becomes the governance layer that ensures AI-generated code meets enterprise standards. The Architecture Repository provides a proven structure for organizing this governance without creating bureaucracy.

The transformation from ungoverned AI outputs to architecturally compliant code requires treating the repository as a knowledge base rather than documentation. Standards become constraints, patterns become guidance, and ADRs become decision context that AI can consume and respect.

Your first step is creating one AI-focused standard. Pick a pattern you want AI to follow consistently: logging, error handling, or code structure. Write it with normative language and include it in your next AI prompt. In my experience, the difference shows up in the first output.

Watch the video for the complete story of how this approach evolved from ungoverned AI chaos to systematic architectural governance: https://www.youtube.com/watch?v=j8y3YccEJp0

What is the first standard you would write for AI-governed development? Share your approach in the comments below.

Resources

- Video: https://www.youtube.com/watch?v=j8y3YccEJp0

- Related: I Applied TOGAF to 4 Teams - Here's What Actually Worked

How do you currently govern AI-generated code in your organization?