Have you ever written "the service should use structured logging" in a prompt, only to find AI treating "should" as optional? You are not alone. The gap between writing standards and AI compliance is not about AI unreliability — it is about imprecise constraint language. When we apply RFC 2119 normative language to AI development standards, compliance jumps from inconsistent to predictable.

This article expands on my video about writing standards that AI can actually follow. We will explore how explicit MUST, SHOULD, and MAY constraints produce consistently governed outputs, while vague guidance produces vague results. The skills architects already have for writing standards directly apply to AI governance — they just need the right format.

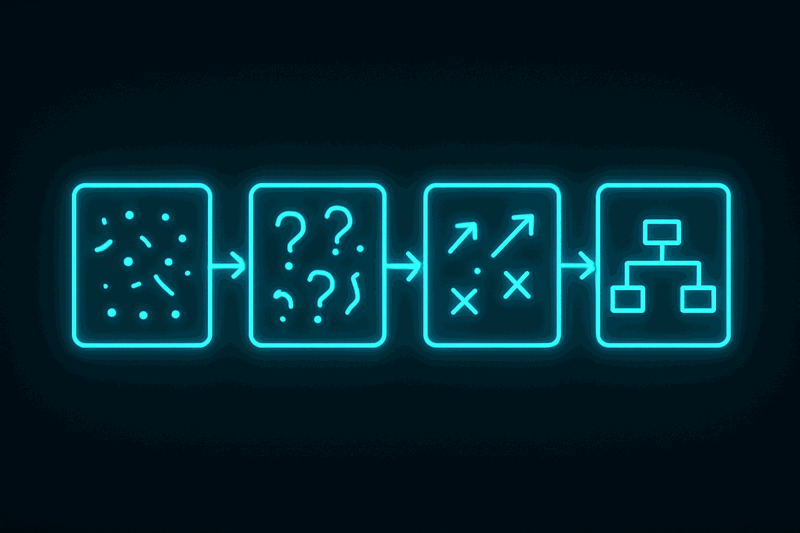

The Problem with Vague Standards

Most architecture standards fail with AI because they use recommendation language instead of requirement language. When we write "services should follow best practices" or "consider using structured logging," we create wiggle room that AI interprets as optional behavior.

This problem becomes critical in enterprise environments where governance cannot be optional. Inconsistent AI outputs mean inconsistent code quality, missed security requirements, and compliance failures. The solution is not more detailed prompts — it is more precise constraint language.

The breakthrough comes from recognizing that AI models are trained on technical documentation that uses RFC 2119 normative language. They understand the hierarchy. When we write "the service MUST use structured logging," AI treats this as a mandatory constraint. When we write "the service SHOULD follow SOLID principles," AI treats this as default behavior with flexibility for valid exceptions.

Understanding RFC 2119 Normative Language

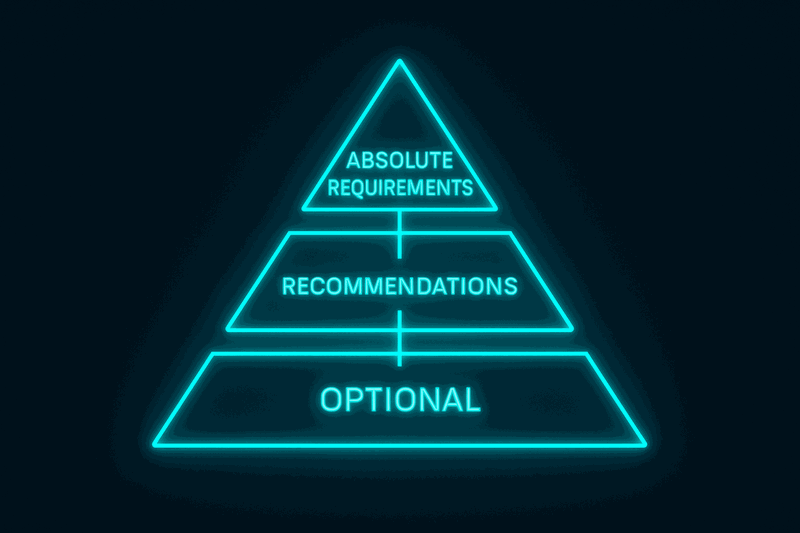

RFC 2119 defines a formal vocabulary for expressing requirement levels in technical specifications: MUST, SHOULD, and MAY, plus negative forms and synonyms such as MUST NOT, SHOULD NOT, SHALL, RECOMMENDED, and OPTIONAL. This is the same language used in internet standards, TOGAF frameworks, and enterprise architecture documents. The hierarchy is simple but powerful.

MUST indicates absolute requirements. These are non-negotiable constraints that AI will treat as mandatory. Use MUST sparingly for critical requirements like security boundaries, data integrity, or regulatory compliance. When AI sees MUST, it produces binary compliance — the requirement is either present or the output is invalid.

SHOULD indicates strong recommendations with valid exceptions. These represent best practices that AI will apply by default but can deviate from when circumstances justify it. Use SHOULD for architectural patterns, coding standards, and design principles. AI treats SHOULD as default behavior with intelligent flexibility.

MAY indicates truly optional behavior. These are enhancements or alternatives that AI will include when contextually appropriate. Use MAY for optimization opportunities, alternative implementations, or feature additions that depend on specific use cases.

The power of this hierarchy is that AI models already understand it. They have been trained on thousands of RFCs, standards documents, and technical specifications that use this language. We do not need to teach AI what MUST means — we just need to use it consistently.

Consider the difference between these two standards:

Vague version: "Services should use structured logging and consider including correlation IDs for traceability."

Normative version: "The service MUST use structured logging. Log entries MUST include a correlation_id field. Services MAY include additional context fields."

The second version produces predictable compliance because AI understands exactly what is required versus what is optional.
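To make the normative version concrete, here is a minimal sketch of output that would satisfy it, using only Python's standard library. It assumes "structured logging" means one JSON object per log entry, which the standard itself does not specify; the `JsonFormatter` class, the `context` key, and the `document-service` logger name are illustrative names, not part of any standard.

```python
import json
import logging
import uuid


class JsonFormatter(logging.Formatter):
    """Render each record as one JSON object (assumed meaning of 'structured')."""

    def format(self, record: logging.LogRecord) -> str:
        entry = {
            "level": record.levelname,
            "message": record.getMessage(),
            # MUST: every entry carries a correlation_id field.
            "correlation_id": getattr(record, "correlation_id", None),
        }
        # MAY: additional context fields, passed under the hypothetical key "context".
        entry.update(getattr(record, "context", {}))
        return json.dumps(entry)


def build_logger(name: str = "document-service") -> logging.Logger:
    """Return a logger whose only handler writes JSON lines to stderr."""
    logger = logging.getLogger(name)
    handler = logging.StreamHandler()
    handler.setFormatter(JsonFormatter())
    logger.handlers = [handler]
    logger.setLevel(logging.INFO)
    logger.propagate = False
    return logger


if __name__ == "__main__":
    log = build_logger()
    log.info(
        "document received",
        extra={"correlation_id": str(uuid.uuid4()), "context": {"tenant": "acme"}},
    )
```

The two MUST statements map to things a reviewer can check in the output (valid JSON, a `correlation_id` key), while the MAY statement maps to fields that can be absent without failing anything.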

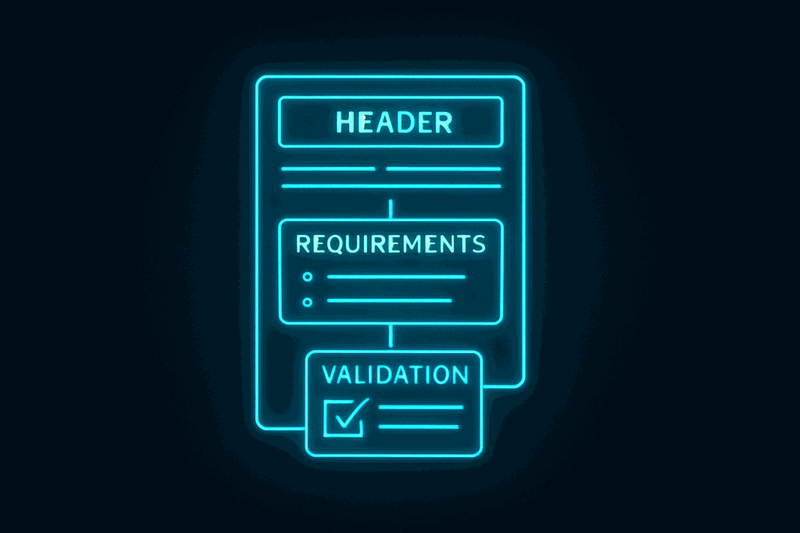

Standard Document Structure That Works

Normative language alone is not enough. AI needs structured documents that it can parse and reference systematically. The most effective structure includes five key sections: Purpose, Scope, Normative Language Declaration, Requirements, and Compliance Checklist.

Purpose explains why the standard exists. This helps AI understand the intent behind requirements and make better decisions about edge cases. Keep purpose statements concise but specific about the problem being solved.

Scope defines what the standard applies to. Be explicit about boundaries. "This standard applies to all document processing services" is clearer than "this standard applies to relevant services." AI needs unambiguous scope to know when to apply requirements.

Normative Language Declaration explicitly states that the document uses RFC 2119 keywords. This primes AI to interpret MUST, SHOULD, and MAY correctly. Include a brief explanation: "MUST indicates an absolute requirement. SHOULD indicates a strong recommendation. MAY indicates an optional item."

Requirements organize constraints by category using normative language. Group related requirements together. Use bullet points or numbered lists for scannability. Avoid burying requirements in paragraphs where AI might miss them.

Compliance Checklist provides validation criteria that double as AI guidance. Each checklist item should be verifiable and linked to a requirement level. This section transforms the standard from documentation into actionable validation.

Here is a template structure that consistently works:

# Standard Name

**Standard ID**: STD-XXX-001

**Version**: 1.0

**Status**: Active

## Purpose

[Why this standard exists - one paragraph]

## Scope

[What this standard applies to - specific boundaries]

## Normative Language

This standard uses RFC 2119 keywords. MUST indicates an absolute requirement. SHOULD indicates a strong recommendation. MAY indicates an optional item.

## Requirements

### Architecture

- The service MUST implement hexagonal architecture boundaries
- The service SHOULD follow SOLID principles
- The service MAY use additional design patterns

### Logging

- The service MUST use structured logging
- Log entries MUST include a correlation_id field
- Services MAY include additional context fields

## Compliance Checklist

| Check | Requirement Level |
|-------|-------------------|
| [ ] Hexagonal boundaries implemented | MUST |
| [ ] Structured logging present | MUST |
| [ ] SOLID principles applied | SHOULD |

This structure enables AI to find relevant requirements quickly and apply them systematically. The compliance checklist becomes both validation criteria and AI guidance.
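One way to see why bulleted requirements are easier to apply than prose is to parse them. The sketch below is an illustration, not a prescribed tool: it assumes requirements are written as `- ` bullets, as in the template, and `extract_requirements` is a hypothetical helper name. Only uppercase keywords are matched.

```python
import re
from collections import defaultdict

# Uppercase RFC 2119 keywords; longer phrases come first so "MUST NOT" wins over "MUST".
KEYWORD = re.compile(r"\b(MUST NOT|SHOULD NOT|MUST|SHOULD|MAY)\b")


def extract_requirements(standard_text: str) -> dict[str, list[str]]:
    """Group bulleted requirement lines by the first normative keyword they contain."""
    by_level: dict[str, list[str]] = defaultdict(list)
    for line in standard_text.splitlines():
        stripped = line.strip()
        if not stripped.startswith("- "):
            continue  # in this sketch, only bullets count as requirements
        match = KEYWORD.search(stripped)
        if match:
            by_level[match.group(1)].append(stripped[2:])
    return dict(by_level)


if __name__ == "__main__":
    sample = """## Requirements
### Logging
- The service MUST use structured logging
- Log entries MUST include correlation_id field
- Services MAY include additional context fields
"""
    for level, items in extract_requirements(sample).items():
        print(level, items)
```

A requirement buried in a paragraph is skipped by this parser, which mirrors the risk the structure is meant to avoid.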

Balancing Constraint Density

The biggest mistake in writing AI-focused standards is getting constraint density wrong. Too many MUST requirements create over-constrained systems that limit valid solutions. Too few constraints produce inconsistent outputs that defeat the purpose of standards.

Constraint density is the balance between specificity and flexibility. At the right density, AI has clear boundaries and still has room to adapt the solution to its context. The key is to use each normative level deliberately.

Use MUST sparingly for requirements that are truly non-negotiable. Security boundaries, data integrity rules, and regulatory compliance typically qualify as MUST requirements. If you find yourself writing many MUST statements, step back and consider whether some could be SHOULD requirements instead.

Use SHOULD for architectural patterns and best practices that represent good defaults but might have valid exceptions. Most design principles, coding standards, and performance guidelines work better as SHOULD requirements. This gives AI flexibility to adapt to specific contexts while maintaining consistency.

Use MAY for features and optimizations that add value in specific situations but are not universally applicable. Optional caching, alternative algorithms, or enhanced monitoring typically belong in the MAY category.
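A quick way to notice a standard drifting toward too many MUST statements is to count how the requirements split across levels. The following is a rough sketch under stated assumptions: `level_shares` is a hypothetical helper, each requirement is classified by the first keyword it contains, and no particular ratio is claimed to be correct.

```python
import re
from collections import Counter

LEVEL = re.compile(r"\b(MUST|SHOULD|MAY)\b")


def level_shares(requirements: list[str]) -> dict[str, float]:
    """Return the fraction of requirements at each normative level."""
    counts: Counter = Counter()
    for requirement in requirements:
        match = LEVEL.search(requirement)
        if match:
            counts[match.group(1)] += 1
    total = sum(counts.values())
    return {
        level: (counts[level] / total if total else 0.0)
        for level in ("MUST", "SHOULD", "MAY")
    }


if __name__ == "__main__":
    requirements = [
        "The service MUST implement hexagonal architecture boundaries",
        "The service SHOULD follow SOLID principles",
        "The service MAY use additional design patterns",
        "The service MUST use structured logging",
        "Log entries MUST include correlation_id field",
        "Services MAY include additional context fields",
    ]
    for level, share in level_shares(requirements).items():
        print(f"{level}: {share:.0%}")
```

The numbers are only a prompt for review: a rising MUST share is a reason to ask which statements are truly non-negotiable, not a failure in itself.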

The traffic law analogy helps calibrate constraint density. MUST is like a red light — you stop, no exceptions. SHOULD is like a speed limit — you follow it but circumstances might justify deviation. MAY is like "right turn on red permitted" — you can if conditions are appropriate.

Test constraint density by observing AI output variation. If outputs are too similar, you might be over-constraining. If outputs vary wildly, you might be under-constraining. The goal is consistent compliance with appropriate adaptation to context.

Building codes provide another useful model. They specify "load-bearing walls MUST support X weight" for safety-critical requirements, "electrical outlets SHOULD be spaced every 12 feet" for recommended practices, and "ceiling height MAY exceed 8 feet" for optional enhancements.

Practical Implementation Guide

Writing your first AI-focused standard requires picking the right pattern to standardize. Start with something you want AI to follow consistently — logging, error handling, or naming conventions work well for initial experiments.

Begin with a simple structure. Define the purpose in one sentence. Specify the scope clearly — what services or components does this apply to? Declare normative language usage so AI interprets requirements correctly.

Write requirements in categories using explicit normative language. Replace every instance of "should consider" with MUST, SHOULD, or MAY, based on the actual requirement level. Be specific about what compliance looks like.

Create a compliance checklist with verifiable items. Each checklist item should connect to a specific requirement and indicate whether it is MUST, SHOULD, or MAY. This checklist becomes your validation criteria.
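"Verifiable" can be taken literally: each checklist item can carry a small check that returns pass or fail. The sketch below assumes the artifact being checked is a single log line and that structured logging means JSON; `ChecklistItem`, `LOGGING_CHECKLIST`, and `failed_musts` are hypothetical names chosen for illustration.

```python
import json
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class ChecklistItem:
    description: str
    level: str  # "MUST", "SHOULD", or "MAY"
    check: Callable[[str], bool]


def is_json_object(line: str) -> bool:
    """True if the line parses as a JSON object."""
    try:
        return isinstance(json.loads(line), dict)
    except json.JSONDecodeError:
        return False


def has_correlation_id(line: str) -> bool:
    """True if the line is a JSON object with a non-empty correlation_id."""
    try:
        entry = json.loads(line)
    except json.JSONDecodeError:
        return False
    return isinstance(entry, dict) and bool(entry.get("correlation_id"))


LOGGING_CHECKLIST = [
    ChecklistItem("Structured logging present", "MUST", is_json_object),
    ChecklistItem("correlation_id field present", "MUST", has_correlation_id),
]


def failed_musts(checklist: list[ChecklistItem], artifact: str) -> list[str]:
    """Return descriptions of MUST items the artifact does not satisfy."""
    return [
        item.description
        for item in checklist
        if item.level == "MUST" and not item.check(artifact)
    ]


if __name__ == "__main__":
    print(failed_musts(LOGGING_CHECKLIST, '{"message": "ok"}'))
```

An item that cannot be expressed as a check like this, such as "follows best practices", is a sign the requirement behind it is still too vague.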

Test the standard by including it in AI prompts and observing compliance. If MUST requirements are sometimes missing, the language might not be explicit enough. If SHOULD requirements never appear, they might be too vague or contextually inappropriate.
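Including the standard in a prompt can be as simple as string assembly. This sketch makes no assumptions about any model API and calls none. The `build_prompt` name, the `<standard>` delimiters, and the closing instruction about SHOULD deviations are illustrative choices, not something the standard format requires.

```python
def build_prompt(standard_markdown: str, task: str) -> str:
    """Assemble a prompt that places the full standard ahead of the task."""
    sections = [
        "Follow the standard below. The keywords MUST, SHOULD, and MAY "
        "are to be interpreted as described in RFC 2119.",
        "<standard>\n" + standard_markdown.strip() + "\n</standard>",
        "Task: " + task.strip(),
        # Assumption: asking for deviations makes SHOULD exceptions visible for review.
        "List any SHOULD requirement you deviate from and explain why.",
    ]
    return "\n\n".join(sections)


if __name__ == "__main__":
    standard = "## Logging\n- The service MUST use structured logging\n"
    print(build_prompt(standard, "Write a request handler for document uploads."))
```

Keeping the standard verbatim rather than paraphrasing it in the prompt means the text you test is the same text you maintain.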

Iterate based on AI outputs. Standards are living documents that improve through usage. Track which requirements AI follows consistently and which need clarification or restructuring.

Common mistakes include using normative language inconsistently, burying requirements in prose instead of making them scannable, and creating checklists that are not actually verifiable. Avoid these by keeping structure consistent and language explicit.

The goal is not perfect compliance on the first attempt. The goal is systematic improvement in AI output consistency through explicit constraints that AI can understand and follow.

Measuring Success and Iteration

Effective AI standards produce measurable improvements in output consistency. Track compliance rates for MUST requirements — these should approach 100% when language is sufficiently explicit. Monitor SHOULD requirement adoption — these should appear in most outputs unless context justifies deviation.
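Tracking compliance per level needs very little machinery. The sketch below assumes you already record, for each AI output, whether each requirement passed. The requirement IDs (`LOG-1`, `ARCH-2`), the data shapes, and the results shown are made up for illustration, not measured data.

```python
from collections import Counter


def compliance_rates(
    results: list[dict[str, bool]], levels: dict[str, str]
) -> dict[str, float]:
    """Compute the pass rate per normative level.

    results: one dict per AI output, mapping requirement ID to pass/fail.
    levels: mapping from requirement ID to "MUST", "SHOULD", or "MAY".
    """
    passed: Counter = Counter()
    total: Counter = Counter()
    for result in results:
        for requirement_id, ok in result.items():
            level = levels[requirement_id]
            total[level] += 1
            passed[level] += int(ok)
    return {level: passed[level] / total[level] for level in total}


if __name__ == "__main__":
    levels = {"LOG-1": "MUST", "ARCH-2": "SHOULD"}
    results = [
        {"LOG-1": True, "ARCH-2": True},
        {"LOG-1": True, "ARCH-2": False},
    ]
    for level, rate in compliance_rates(results, levels).items():
        print(f"{level}: {rate:.0%}")
```

A MUST rate below 100% points at a specific requirement to reword, while a low SHOULD rate is worth reading case by case to see whether the deviations were justified.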

Document patterns where AI struggles with compliance. These often indicate unclear scope, ambiguous language, or constraint density issues. Use these patterns to refine standards systematically.

Consider the broader governance implications. Standards that AI follows consistently enable governance without approval bottlenecks. Teams can develop with confidence that outputs meet architectural requirements without manual review of every decision.

The ultimate measure of success is whether the standard reduces architectural corrections in AI-generated code. When standards work properly, AI outputs require minimal architectural review because constraints are built into the generation process rather than applied afterward.

Conclusion

Writing standards that AI can follow transforms architecture governance from reactive correction to proactive constraint. The skills architects already have for writing standards apply directly to AI development; they just need RFC 2119 normative language and a structured document format.

Explicit constraints produce consistent outputs. Vague guidance produces vague results. The difference between "should use structured logging" and "MUST use structured logging" is the difference between optional suggestion and mandatory compliance.

Start with one pattern you want AI to follow consistently. Structure it with Purpose, Scope, a Normative Language declaration, Requirements using MUST/SHOULD/MAY, and a Compliance Checklist. Include it in your next AI prompt and observe the difference. The improvement in consistency should show up quickly, and the checklist gives you a way to measure it.

Standards become AI governance mechanisms when they use language AI understands. RFC 2119 normative language is that bridge between human intent and AI compliance.

Resources

- Video: https://www.youtube.com/watch?v=SU77x7a-GYM

- External: RFC 2119 (Key words for use in RFCs to Indicate Requirement Levels)

Watch the video for the complete walkthrough of writing your first AI-focused standard: https://www.youtube.com/watch?v=SU77x7a-GYM

What pattern would you standardize first for AI development? Share your thoughts in the comments.