Someone recently told me that model-level gradient descent on regulation data makes architectural governance unnecessary. That is like saying airbags make traffic laws optional. Both save lives, but they operate at completely different layers of the system.

This comment exposed a dangerous assumption spreading through our industry: that technical controls at the model layer constitute enterprise AI governance. The data tells a troubling story. 88% of organizations use AI, yet only 25% have governance programs. That 63-point gap suggests that many organizations have technical controls but no governance structures.

In this guide, I will show you the distinction between model guardrails and enterprise AI governance, why major regulatory frameworks position governance upstream from technical controls, and how to diagnose which governance layers your organization actually has versus which you assume exist.

The Fundamental Distinction: What vs How

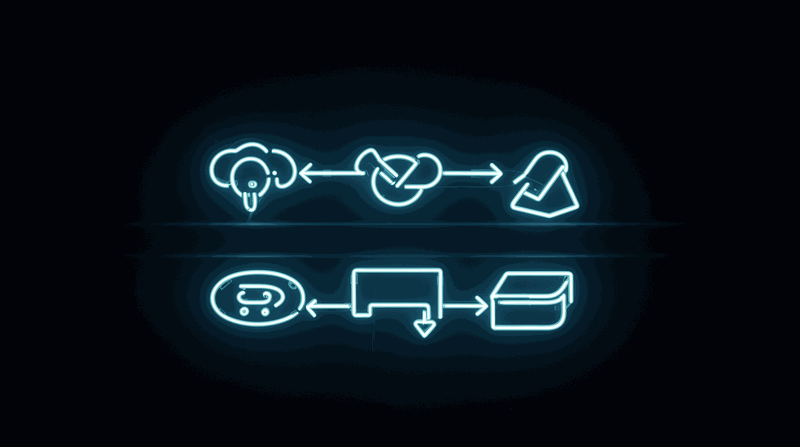

The confusion starts with a category error. Model guardrails and enterprise governance operate at different layers with different purposes. Understanding this distinction is critical for architects responsible for AI governance strategy.

Governance decides WHAT. Guardrails enforce HOW.

Governance determines what AI systems should exist, what risk classification they carry, what standards they must meet, and who is accountable for their outcomes. These are organizational decisions made before deployment.

Guardrails are technical mechanisms that enforce constraints during model operation: inference-time filtering, content policies, output validation, and prompt sanitization. These are technical controls that act at runtime, once a system is already deployed.

Think about building codes versus physical barriers. Building codes decide whether a balcony can exist, how high the building can be, what materials are permitted, and who is accountable for safety. Physical guardrails are the barriers that prevent people from falling off the balcony once it exists.

Nobody would argue that installing guardrails on a balcony eliminates the need for building codes. Yet organizations routinely argue that installing model guardrails eliminates the need for enterprise AI governance.

The Audit That Revealed Empty Governance

A large financial services organization deployed AI-powered fraud detection and customer service chatbots. IT leadership reported "full compliance" based on vendor guardrail dashboards showing green status across all models. Internal audit requested AI governance documentation for annual review.

The auditors asked for AI system inventory, architectural decision records, standards conformance evidence, and accountability mapping. The vendor dashboard showed output filtering was active and content policies were enforced. But it could not produce an inventory, decision records, standards conformance, or accountability mapping.

The architect recognized the gap: model guardrails were operating correctly at the technical layer, but organizational policy, architectural governance, and application governance had no documented structures. Emergency remediation took three months: creating an AI inventory, conducting risk classification workshops, establishing an ADR practice for AI decisions, and mapping standards to AI systems.

The lesson: model guardrails report on what the model does. Governance artifacts document what the organization decided, why, and who is accountable. Different questions require different answers from different layers.

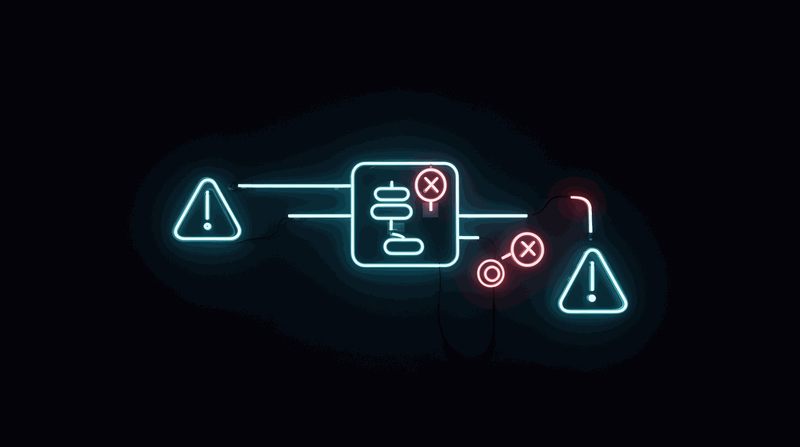

Vendor Dependency: When Someone Else Controls Your Compliance

The second dangerous assumption is treating vendor-provided guardrails as enterprise governance. Vendor controls reflect the vendor's risk assessment, not your organization's specific governance needs.

Vendor controls are not enterprise governance.

InformationWeek research confirms that vendor guardrails can change without notice as part of routine business decisions. Your organization's safety posture becomes dependent on vendor priorities that may not align with your risk tolerance or regulatory requirements.

This repeats the mistake organizations made with security architecture in 2005: outsourcing security policy to firewall vendors, then discovering that "firewall configuration" is not "security architecture."

The Vendor Update Nobody Expected

A healthcare AI provider used a vendor LLM with built-in safety guardrails for clinical decision support. The organization relied on the vendor's guardrails as its compliance mechanism for medical content accuracy and patient safety.

The vendor updated model safety parameters as part of routine improvement. The model became more conservative in clinical responses, refusing to provide differential diagnosis suggestions it had previously offered and flagging routine medical terminology as potentially harmful.

Clinical workflows were disrupted. The healthcare organization had no documented governance requirements independent of vendor guardrails. They had assumed "vendor handles compliance" without specifying their own requirements for what the AI system MUST, SHOULD, and MAY do.

The architect established governance requirements using RFC 2119 normative language: documented what the AI system MUST provide, what it SHOULD do, and what it MUST NOT do. These requirements existed independently of vendor guardrail implementation.

With documented governance requirements, the organization could evaluate vendor updates against their own standards rather than accepting changes as default governance. The vendor update was appropriate for general consumers but too restrictive for clinical use.
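One way to picture that evaluation is to hold the requirements as data and check observed system behavior against them after each vendor update. The sketch below is only illustrative: the requirement IDs, the behavior names, and the `evaluate` function are hypothetical, and `CDS-003` is an invented example of a SHOULD-level requirement rather than something from this case. It assumes MUST and MUST NOT failures count as violations while SHOULD failures only raise warnings.

```python
from dataclasses import dataclass
from enum import Enum


class Level(Enum):
    """RFC 2119 requirement levels used in this sketch."""
    MUST = "MUST"
    MUST_NOT = "MUST NOT"
    SHOULD = "SHOULD"


@dataclass(frozen=True)
class Requirement:
    req_id: str     # hypothetical identifier
    level: Level
    behavior: str   # key into the observed-behavior mapping


def evaluate(requirements, observed):
    """Return (violations, warnings) as lists of requirement IDs.

    `observed` maps a behavior name to True if the system currently
    exhibits it. Behaviors missing from the mapping are treated as
    not exhibited, which is an assumption of this sketch.
    """
    violations, warnings = [], []
    for req in requirements:
        present = observed.get(req.behavior, False)
        if req.level is Level.MUST and not present:
            violations.append(req.req_id)
        elif req.level is Level.MUST_NOT and present:
            violations.append(req.req_id)
        elif req.level is Level.SHOULD and not present:
            warnings.append(req.req_id)
    return violations, warnings


# Hypothetical requirements for the clinical decision support system.
REQUIREMENTS = [
    Requirement("CDS-001", Level.MUST, "offers_differential_diagnosis"),
    Requirement("CDS-002", Level.MUST_NOT, "blocks_routine_medical_terms"),
    Requirement("CDS-003", Level.SHOULD, "cites_clinical_sources"),
]

before_update = {
    "offers_differential_diagnosis": True,
    "blocks_routine_medical_terms": False,
    "cites_clinical_sources": False,
}
after_update = {
    "offers_differential_diagnosis": False,
    "blocks_routine_medical_terms": True,
    "cites_clinical_sources": False,
}

if __name__ == "__main__":
    print("before:", evaluate(REQUIREMENTS, before_update))
    print("after: ", evaluate(REQUIREMENTS, after_update))
```

The point of the design is that the requirements live in your repository, not in the vendor's configuration, so the same check can be rerun whenever the vendor changes something.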

The lesson: when you outsource governance to a vendor, you outsource control over your compliance posture. Independent governance requirements persist regardless of vendor changes.

What Governance Actually Requires According to Major Frameworks

The regulatory landscape makes the distinction clear. Major frameworks position governance upstream from technical controls, not as a substitute for them.

Governance is upstream, not downstream.

NIST AI Risk Management Framework

The NIST AI RMF defines four core functions: Govern, Map, Measure, and Manage. The GOVERN function spans the entire AI lifecycle, establishing the foundation for all other activities. It requires organizational structures, policies, and processes before any technical implementation.

The framework explicitly states that governance activities occur before and during AI system development, not just during operation where guardrails function.

EU AI Act Requirements

The EU AI Act requires risk-based classification before deployment, documentation of AI system purposes and limitations, and organizational accountability structures. These are governance requirements that must exist independently of technical controls.

ISO 42001

ISO 42001 provides the management system framework with five pillars: Transparency, Accountability, Human Oversight, Data Governance, and Continual Improvement. None of these pillars can be satisfied by model-level controls alone.

The Integration Challenge

All three frameworks require artifacts that model guardrails cannot produce:

- AI system inventory with risk classifications

- Architectural decision records documenting deployment rationale

- Standards conformance evidence

- Accountability mapping for AI system outcomes

- Documented policies for AI system lifecycle management

Model guardrails operate during inference. Governance frameworks require decisions and documentation before inference begins.

This connects directly to the governance-as-constraints concept from enterprise architecture. Standards using RFC 2119 normative language (MUST, SHOULD, MAY) create enforceable requirements. Architectural decision records provide traceability for why specific AI systems were approved for deployment.

The Diagnostic: Where Is Your Organization?

Most organizations heavily invest in Layer 5 (model controls) and assume it covers Layers 1-4. Here is how to diagnose which governance layers your organization actually has versus which you assume exist.

The Five-Layer Preview:

1. Regulatory Layer: Compliance with NIST AI RMF, EU AI Act, ISO 42001

2. Organizational Layer: Policies, risk committees, accountability structures

3. Architectural Layer: Standards, ADRs, validation pipelines

4. Application Layer: System-specific governance, integration controls

5. Model Layer: Inference-time filtering, content policies, output validation

Quick Self-Assessment

Ask these questions about your organization's AI governance:

Layer 1 (Regulatory): Can you map your AI systems to NIST AI RMF categories? Do you have documented compliance with applicable regulations?

Layer 2 (Organizational): Does your organization have an AI inventory? A risk classification system for AI use cases? Designated accountability for AI system outcomes?

Layer 3 (Architectural): Do you have documented architectural decisions for AI system deployments? Standards that specify what AI systems MUST, SHOULD, and MAY do? Validation pipelines that enforce governance requirements?

Layer 4 (Application): Does each AI system have documented governance requirements? Integration controls that enforce organizational policies?

Layer 5 (Model): Do your models have inference-time filtering? Content policies? Output validation?

If your answers focus primarily on Layer 5, you have guardrails but not governance. Gartner research confirms that 71% of compliance leaders lack visibility into AI use cases, which indicates missing governance at Layers 1-3.

The diagnostic reveals a pattern: organizations assume vendor dashboards showing green status constitute governance coverage across all layers. In reality, vendor controls operate only at Layer 5.
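The self-assessment can be tallied mechanically. The following is a minimal sketch, not a scoring standard: the `diagnose` function and its verdict strings are hypothetical, and it assumes a strict rule that a layer counts as covered only when every question for that layer is answered yes.

```python
# Layer numbers and names as listed in the five-layer preview.
LAYERS = {
    1: "Regulatory",
    2: "Organizational",
    3: "Architectural",
    4: "Application",
    5: "Model",
}


def diagnose(answers):
    """Summarize a self-assessment.

    `answers` maps a layer number to a list of booleans, one per
    question asked for that layer. A layer with no answers, or with
    any "no", is treated as missing (an assumption of this sketch).
    """
    covered = {n for n in LAYERS if answers.get(n) and all(answers[n])}
    missing = sorted(set(LAYERS) - covered)
    if not covered:
        verdict = "no layers covered"
    elif covered == {5}:
        verdict = "guardrails but not governance"
    elif not missing:
        verdict = "all five layers covered"
    else:
        verdict = "partial governance"
    return {
        "covered": sorted(covered),
        "missing": missing,
        "verdict": verdict,
    }


if __name__ == "__main__":
    # An organization whose only "yes" answers are at the model layer.
    typical = {
        1: [False, False],
        2: [False, False, False],
        3: [False, False, False],
        4: [False, False],
        5: [True, True, True],
    }
    result = diagnose(typical)
    for number in result["missing"]:
        print(f"Layer {number} ({LAYERS[number]}): missing")
    print("Verdict:", result["verdict"])
```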

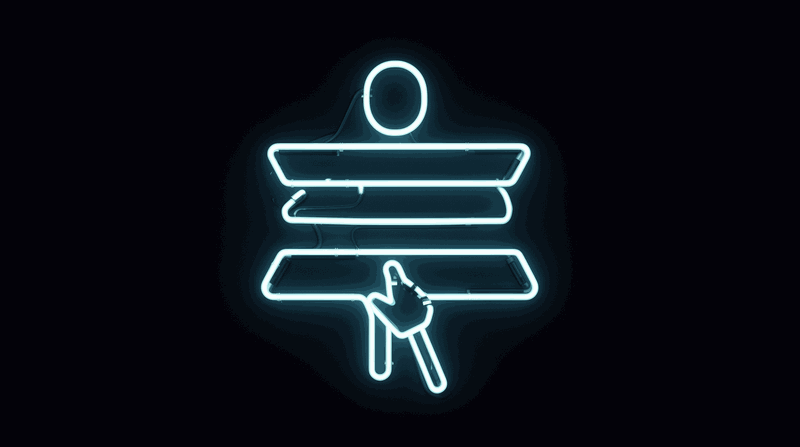

Where Architects Fit in AI Governance

Architects own Layer 3 (Architectural) and influence Layers 2 and 4. This positioning makes architects critical to bridging the governance gap.

Architectural governance artifacts include:

- Architecture repository with AI system documentation

- Standards using RFC 2119 normative language for AI requirements

- Architectural decision records for AI deployment decisions

- Validation pipelines that enforce governance requirements before deployment

These are governance artifacts, not model parameters. They persist independently of vendor changes and provide the documentation foundation that auditors, regulators, and organizational leadership require.
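As an illustration of the last artifact, a validation pipeline step can refuse to deploy a system whose governance records are incomplete. This is a sketch under assumptions: `DeploymentRequest`, its field names, and the sample identifiers are hypothetical, and a real pipeline would look these records up in an architecture repository rather than accept them as plain strings.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class DeploymentRequest:
    """Governance references attached to a deployment (hypothetical shape)."""
    system_name: str
    inventory_id: Optional[str] = None       # entry in the AI inventory
    risk_class: Optional[str] = None         # assigned risk classification
    adr_id: Optional[str] = None             # architectural decision record
    accountable_owner: Optional[str] = None  # who answers for outcomes


REQUIRED_FIELDS = ("inventory_id", "risk_class", "adr_id", "accountable_owner")


def governance_gate(request):
    """Return the names of missing governance artifacts; empty means pass."""
    return [name for name in REQUIRED_FIELDS if not getattr(request, name)]


def check_or_fail(request):
    """Raise ValueError if the request lacks any required artifact."""
    missing = governance_gate(request)
    if missing:
        raise ValueError(
            f"{request.system_name}: deployment blocked, missing "
            + ", ".join(missing)
        )
    return True


if __name__ == "__main__":
    request = DeploymentRequest(
        system_name="fraud-detection",
        inventory_id="AI-0001",  # illustrative value
        risk_class="high",       # illustrative value
    )
    try:
        check_or_fail(request)
    except ValueError as err:
        print(err)
```

Note that the gate says nothing about model behavior. It only checks that the organizational decisions were recorded before the system reaches production, which is the part a guardrail dashboard cannot show.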

The architect's role in AI governance strategy involves translating organizational policies (Layer 2) into enforceable technical standards (Layer 3) and ensuring application-specific governance (Layer 4) aligns with architectural requirements.

Your Action This Week

Audit your organization's AI governance: list every AI use case, then for each one check whether there is an AI inventory entry, a risk classification, an architectural decision record, and standards conformance evidence.

If the answer is "the vendor dashboard shows green," you have guardrails but not governance.

This audit will reveal which layers exist and which are missing. Most organizations discover they have invested heavily in Layer 5 while assuming it covers Layers 1-4.
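If a spreadsheet feels too loose, the audit can be captured in a few lines of code. This is a rough sketch: the `audit` function, the artifact names, and the sample use cases are assumptions chosen to mirror the four checks above, not a prescribed format.

```python
from collections import Counter

# The four artifacts to check for each AI use case.
ARTIFACTS = (
    "inventory_entry",
    "risk_classification",
    "decision_record",
    "conformance_evidence",
)


def audit(use_cases):
    """Report governance gaps across a portfolio of AI use cases.

    `use_cases` maps a use case name to the set of artifacts that
    actually exist for it. Returns (gaps, totals): the missing
    artifacts per use case, and how often each artifact is missing.
    """
    gaps = {
        name: [artifact for artifact in ARTIFACTS if artifact not in existing]
        for name, existing in use_cases.items()
    }
    totals = Counter(
        artifact for missing in gaps.values() for artifact in missing
    )
    return gaps, totals


if __name__ == "__main__":
    # Illustrative data only.
    portfolio = {
        "fraud detection": {"inventory_entry", "risk_classification"},
        "customer service chatbot": set(),
    }
    gaps, totals = audit(portfolio)
    for name, missing in gaps.items():
        status = ", ".join(missing) if missing else "complete"
        print(f"{name}: {status}")
    for artifact in ARTIFACTS:
        print(f"{artifact} missing in {totals[artifact]} use case(s)")
```

The per-artifact totals show where to start: if decision records are missing everywhere, establishing an ADR practice probably matters more than fixing any single system.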

Conclusion

Model guardrails control what AI systems output during inference. Enterprise AI governance decides what AI systems should exist, how they are classified by risk, who is accountable for their outcomes, and what standards they must meet.

Both are necessary. Neither substitutes for the other. Architects own the architectural layer (Layer 3) that translates organizational policy into enforceable standards.

The 63-point gap between AI adoption and governance maturity suggests that many organizations have technical controls but no governance structures. Major regulatory frameworks (NIST AI RMF, EU AI Act, ISO 42001) unanimously position governance upstream from technical controls.

When audit, incident, or regulatory review comes, model guardrails cannot answer "who decided to deploy this system?" or "what risk classification was assigned?" Those questions require governance artifacts that exist independently of vendor dashboards.

Start with the audit. List your AI use cases. Check which governance layers actually exist. The distinction between guardrails and governance is not theoretical. It is the difference between compliance theater and enterprise AI governance that survives scrutiny.

Resources

- Video: Watch on YouTube

- Related: AI Enterprise Architecture Explained: Platform, Applications, and Governance

- Related: Standards That AI Can Follow: Writing Normative Constraints for AI-Assisted Development

- Related: Architecture Decision Records as AI Context: Why Your AI Needs to Know What You've Already Decided

What governance artifacts exist in your organization beyond vendor dashboards? Share your audit findings in the comments.