Introduction

I spent two weeks building retrieval-augmented generation (RAG) infrastructure for a problem that did not require infrastructure. The corpus was 200 pages of personal reference material, roughly 150,000 tokens, and every token fit inside a single context window. I had built a chunking pipeline, deployed a vector database, tuned retrieval precision, and iterated on query interfaces. Then I measured the corpus, loaded it into context, and replaced the entire system with a single well-constructed prompt. Query latency dropped from 30 seconds to under 3 seconds. Infrastructure cost dropped to zero.

This article expands on my video about RAG vs long context windows, where I walk through both that failure and a contrasting success — a technical documentation corpus where RAG was unambiguously the right pattern. The gap between those two experiences produced the Context Fit Test: five diagnostic dimensions that determine which pattern a knowledge retrieval problem actually requires.

This is written for developers and architects who are designing AI-assisted systems and want a structured way to make the RAG-versus-context decision before committing to an implementation. If you have ever reached for the more complex pattern because it felt more serious, this framework is for you.

Why this decision is harder than it looks

The debate framing that circulates in the AI architecture community — "RAG is dead" versus "long context makes retrieval obsolete" — is genuinely unhelpful. It converts a structured trade-off into a tribal allegiance, and architects who get swept into that framing make the same mistake I made: they choose a pattern before they measure the problem.

The "RAG is dead" argument rests on a real observation. Context windows have grown dramatically over the past two years. Corpora that required retrieval infrastructure in 2022 can now fit inside a single inference call. For a meaningful class of problems — bounded, stable, low-query-volume knowledge bases — full-context loading is now the correct answer, and building retrieval infrastructure on top of it is pure overhead. I proved that to myself at some cost.

But the argument typically assumes a bounded corpus. It assumes the data fits, that it is stable, and that token cost at scale is not a constraint. Those assumptions do not hold for the class of problems RAG was designed to solve. An unbounded corpus does not become bounded because context windows grew. A corpus updated weekly does not become static because inference got cheaper. Sub-second retrieval requirements for automated reasoning workflows do not relax because large language models can theoretically hold more tokens in context.

What changed is the threshold — the boundary between "load it in context" and "build retrieval infrastructure" moved. It did not disappear. In my experience, architects making the best decisions right now are not the ones who picked a side. They are the ones running a structured diagnostic before they choose a pattern.

There is also a subtler force at work: complexity feels like rigour. When I built that over-engineered pipeline, I was proud of it. It looked serious. It had moving parts. The instinct to reach for the more sophisticated architecture when a simpler one would serve is not a beginner mistake — it is a persistent professional habit that requires active management. Understanding why each pattern exists, and what conditions it was designed to address, is the only reliable counter to that instinct.

The Context Fit Test: five dimensions that determine the answer

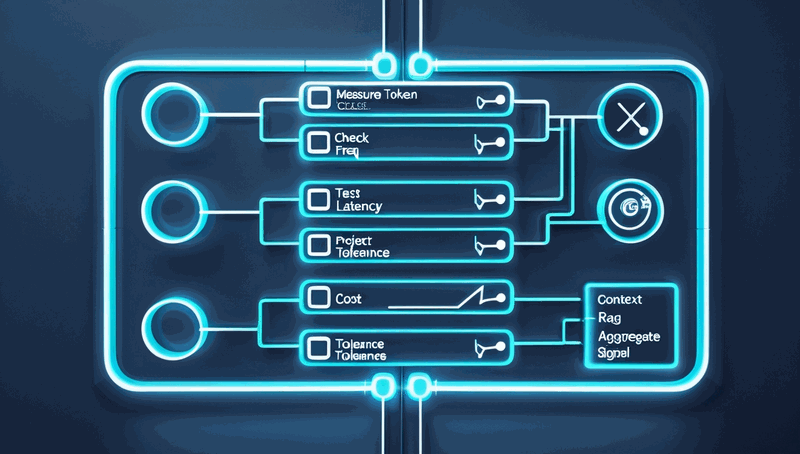

The Context Fit Test is not a formal standard. It is a structured way of forcing yourself to examine the dimensions that actually determine the correct pattern, rather than defaulting to whichever approach you most recently read about. Each dimension produces a directional signal. The aggregate of those signals across all five dimensions gives you the architectural answer — or, when dimensions conflict, identifies the boundary where a hybrid approach is warranted.

Data scope

Data scope is the first and most clarifying question: is the corpus bounded and stable, or unbounded and growing? Bounded means you can enumerate the corpus today and expect it to look roughly the same next month. Unbounded means the corpus is a living thing — documents added, updated, and restructured on a continuous basis.

The personal documentation assistant I over-engineered was bounded: two hundred pages of accumulated notes that changed rarely. The technical documentation corpus I built RAG for was unbounded: several hundred reference documents, growing by new entries weekly, with no ceiling on future size. That single dimension already points strongly toward the answer in both cases. When the corpus is bounded and small enough to fit in context, loading it directly is almost always simpler and faster. When the corpus is unbounded, it will eventually outgrow any fixed context window, so retrieval infrastructure is not optional.
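One way to make the bounded/unbounded distinction concrete is to project how long a growing corpus stays inside a fixed context budget. This is a minimal sketch under stated assumptions: `weeks_until_overflow` is a hypothetical helper, and the window size, reserved tokens, document counts, and tokens-per-document figures are placeholders, not measurements from either system.

```python
import math

def weeks_until_overflow(current_tokens: int, weekly_growth_tokens: int,
                         context_budget_tokens: int) -> float:
    """Weeks until the corpus exceeds the context budget.

    Returns 0.0 if it already exceeds the budget and math.inf if it is not growing.
    """
    if current_tokens > context_budget_tokens:
        return 0.0
    if weekly_growth_tokens <= 0:
        return math.inf  # a static corpus never overflows on its own
    headroom = context_budget_tokens - current_tokens
    return headroom / weekly_growth_tokens

# Placeholder figures: a 1M-token window with 200k tokens reserved for
# instructions, the question, and the answer leaves an 800k-token corpus budget.
budget = 1_000_000 - 200_000

# Bounded, effectively static personal notes (~150k tokens).
print(weeks_until_overflow(150_000, 0, budget))                  # inf

# Hypothetical growing docs set: 300 docs x 2,000 tokens, plus 10 new docs a week.
print(weeks_until_overflow(300 * 2_000, 10 * 2_000, budget))     # 10.0
```

Any non-zero growth rate produces a finite horizon. A bounded, static corpus returns `inf`; a growing one only tells you how long you have before retrieval becomes unavoidable.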

Update frequency

Update frequency sharpens the picture that data scope establishes. Static data — data that does not change — can be loaded into context once and reused across queries with no staleness concern. Dynamic data — data that changes frequently — makes full-context loading increasingly expensive and increasingly stale over time.

If your corpus is updated weekly, full-context loading leaves you two options: send the entire current corpus on every query, paying the full token cost on each call, or reuse a snapshot that drifts further from the current state with every update. RAG indexes update incrementally: a new or changed document is embedded and inserted into the index without touching the rest. Full-context loading has no equivalent of an incremental update; every change to the corpus means the next query must process the entire context again.
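A minimal sketch of what "incremental" means in practice, assuming documents are keyed by a stable id and change detection uses a content hash. `IncrementalIndex` and `toy_embed` are hypothetical: the hashed bag-of-words vector is a placeholder for a real embedding model, and a production vector store would expose its own (different) upsert API.

```python
import hashlib

def toy_embed(text: str, dims: int = 64) -> list[float]:
    """Placeholder for a real embedding model: hashed bag-of-words counts."""
    vec = [0.0] * dims
    for word in text.lower().split():
        bucket = int(hashlib.sha256(word.encode()).hexdigest(), 16) % dims
        vec[bucket] += 1.0
    return vec

class IncrementalIndex:
    """Toy vector index that re-embeds only new or changed documents."""

    def __init__(self) -> None:
        self.entries: dict[str, tuple[str, list[float]]] = {}  # id -> (hash, vector)
        self.embed_calls = 0

    def upsert(self, doc_id: str, text: str) -> bool:
        """Insert or update one document; return True if it was (re)embedded."""
        digest = hashlib.sha256(text.encode()).hexdigest()
        existing = self.entries.get(doc_id)
        if existing is not None and existing[0] == digest:
            return False  # unchanged content: no embedding work at all
        self.entries[doc_id] = (digest, toy_embed(text))
        self.embed_calls += 1
        return True

    def delete(self, doc_id: str) -> None:
        self.entries.pop(doc_id, None)

index = IncrementalIndex()
week_1 = {"auth.md": "Token refresh flow ...", "limits.md": "Rate limits ..."}
for doc_id, text in week_1.items():
    index.upsert(doc_id, text)

# Week 2: one document untouched, one edited, one new.
week_2 = {"auth.md": "Token refresh flow ...",
          "limits.md": "Rate limits v2 ...",
          "webhooks.md": "Webhook retries ..."}
changed = [doc_id for doc_id, text in week_2.items() if index.upsert(doc_id, text)]
print(changed, index.embed_calls)  # ['limits.md', 'webhooks.md'] 4
```

The weekly update costs two embeddings, not three, and the untouched document is never reprocessed. A full-context prompt has no per-document unit of work to skip in this way.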

Latency requirement

Latency requirement is where many architects underestimate the structural difference between the two patterns. Full-context loading builds a per-call cost into every request: each inference call carries the entire corpus as input tokens, so latency grows with corpus size. For interactive use cases, such as a user asking a question and waiting a few seconds for an answer, that latency may be entirely acceptable. For automated reasoning workflows where an AI agent queries a knowledge source mid-task and expects a relevant result in under one second, the arithmetic changes completely.

RAG retrieval, once the index is built, operates in milliseconds at the retrieval layer. The inference call that follows receives only the retrieved context, not the entire corpus. For agent-based systems where knowledge retrieval is a tool call inside a reasoning loop, sub-second retrieval is frequently a hard constraint, not a preference. Context loading cannot match that at meaningful corpus sizes.
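To show the structural difference, here is a minimal sketch of the retrieval step followed by prompt assembly: only the top-k documents reach the inference call. It is an illustration under loud assumptions: `tokenize`, `retrieve`, and `build_prompt` are hypothetical, and term overlap stands in for embedding similarity. Production vector stores typically use approximate nearest-neighbour indexes to keep this step fast at large corpus sizes.

```python
import re

def tokenize(text: str) -> set[str]:
    """Lowercased alphanumeric terms; a crude stand-in for a real tokenizer."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(query: str, corpus: dict[str, str], k: int = 3) -> list[str]:
    """Return the k document ids with the most query-term overlap.

    Term overlap stands in for embedding similarity in a real vector index.
    """
    query_terms = tokenize(query)
    ranked = sorted(corpus,
                    key=lambda doc_id: len(query_terms & tokenize(corpus[doc_id])),
                    reverse=True)
    return ranked[:k]

def build_prompt(query: str, corpus: dict[str, str], doc_ids: list[str]) -> str:
    """Assemble the inference input from the selected documents only."""
    context = "\n\n".join(f"[{doc_id}]\n{corpus[doc_id]}" for doc_id in doc_ids)
    return f"Answer using only the context below.\n\n{context}\n\nQuestion: {query}"

corpus = {
    "auth.md": "Access tokens expire after one hour and are refreshed with the refresh token.",
    "limits.md": "Each API key may send 100 requests per minute before receiving HTTP 429.",
    "webhooks.md": "Webhook deliveries are retried with exponential back off for up to 24 hours.",
    "errors.md": "Error responses include a code, a message, and a request id for support.",
}
query = "How do webhook retries back off?"

hits = retrieve(query, corpus, k=1)
print(hits)  # ['webhooks.md']
rag_prompt = build_prompt(query, corpus, hits)
full_prompt = build_prompt(query, corpus, list(corpus))
print(len(rag_prompt) < len(full_prompt))  # True
```

With four documents the saving is trivial; the point is that the RAG prompt stays roughly constant in size while the full-context prompt grows with every document added.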

Cost constraint

Token cost for full-context loading scales linearly with corpus size and with query volume, because every call pays for the whole corpus. At 150,000 tokens per query and ten queries per day, the cost is manageable, even negligible, at current inference pricing. At 10 million tokens per query and thousands of queries per day, token cost becomes the dominant operational expense of the system. RAG carries a largely fixed cost (embedding compute, index storage, and the retrieval service) plus a per-query token cost for only the retrieved context, so its total grows far more slowly with query volume. Below a certain combination of corpus size and query volume, RAG costs more than it saves. Above it, RAG is cheaper. Knowing roughly where your system sits relative to that threshold is essential before you commit to either pattern.
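The threshold can be estimated with simple arithmetic. This is a sketch under explicit assumptions: the price per million input tokens, the fixed monthly RAG cost, and the retrieved-context size are hypothetical placeholders, output tokens are ignored because they are similar under both patterns, and a month is taken as 30 days.

```python
import math
from dataclasses import dataclass

# Hypothetical figures; substitute your provider's pricing and your own infrastructure bill.
PRICE_PER_M_INPUT_TOKENS = 3.00   # dollars per million input tokens
RAG_FIXED_MONTHLY = 500.00        # embedding compute, index storage, retrieval service

@dataclass
class Workload:
    corpus_tokens: int              # tokens sent per call under full-context loading
    queries_per_month: int
    retrieved_tokens: int = 5_000   # hypothetical top-k context size per call under RAG

def full_context_cost(w: Workload) -> float:
    """Monthly input-token cost when every call carries the whole corpus."""
    return w.corpus_tokens * w.queries_per_month * PRICE_PER_M_INPUT_TOKENS / 1e6

def rag_cost(w: Workload) -> float:
    """Monthly RAG cost: fixed infrastructure plus retrieved-context tokens."""
    return RAG_FIXED_MONTHLY + w.retrieved_tokens * w.queries_per_month * PRICE_PER_M_INPUT_TOKENS / 1e6

def break_even_queries(w: Workload) -> float:
    """Monthly query volume above which RAG becomes cheaper than full context."""
    saving_per_query = (w.corpus_tokens - w.retrieved_tokens) * PRICE_PER_M_INPUT_TOKENS / 1e6
    return math.inf if saving_per_query <= 0 else RAG_FIXED_MONTHLY / saving_per_query

personal = Workload(corpus_tokens=150_000, queries_per_month=300)      # ~10 queries/day
docs = Workload(corpus_tokens=10_000_000, queries_per_month=60_000)    # ~2,000 queries/day

for name, w in [("personal notes", personal), ("docs corpus", docs)]:
    print(f"{name}: full-context ${full_context_cost(w):,.2f}, RAG ${rag_cost(w):,.2f}, "
          f"break-even {break_even_queries(w):,.0f} queries/month")
```

With these placeholder numbers, full context is cheaper for the personal notes ($135.00 versus $504.50 a month, with break-even around 1,149 queries a month), while for the large corpus it costs $1,800,000.00 against $1,400.00 for RAG.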

Accuracy profile

The final dimension is the one that surprises practitioners most. Large language models show a known degradation pattern on long inputs: a model's ability to attend to information positioned in the middle of a very long context is weaker than its ability to attend to information at the beginning or end. This is sometimes called Lost in the Middle degradation, and it has been documented in published research across multiple model families.

For some use cases, that degradation is acceptable. A slightly imprecise answer drawn from a long personal knowledge base is still useful. For others, particularly agent workflows where a missed relevant document breaks the reasoning chain rather than merely degrading answer quality, zero tolerance for retrieval misses is a hard requirement. RAG retrieval, when the index and queries are well constructed, surfaces the most relevant documents with high precision, and its accuracy does not depend on where a document would sit inside a long prompt. Full-context loading offers no equivalent assurance once the corpus is large enough to trigger positional degradation.
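Rather than assuming your model and corpus are affected, you can probe for positional degradation directly: plant a single known fact (a "needle") at different relative depths in a long context and check whether the answer recovers it. The sketch below only builds the prompts and scores answers; `place_needle`, `build_probe_prompts`, `run_probe`, the depth grid, and the `ask_model` callable are all hypothetical and must be wired to your own inference client.

```python
from typing import Callable

def place_needle(filler_docs: list[str], needle: str, depth: float) -> list[str]:
    """Return a copy of filler_docs with needle inserted at a relative depth.

    depth=0.0 puts the needle first, depth=1.0 puts it last.
    """
    if not 0.0 <= depth <= 1.0:
        raise ValueError("depth must be between 0.0 and 1.0")
    position = round(depth * len(filler_docs))
    return filler_docs[:position] + [needle] + filler_docs[position:]

def build_probe_prompts(filler_docs: list[str], needle: str, question: str,
                        depths: tuple[float, ...] = (0.0, 0.25, 0.5, 0.75, 1.0)) -> dict[float, str]:
    """One prompt per depth, each containing the same filler plus the needle."""
    return {
        depth: "\n\n".join(place_needle(filler_docs, needle, depth)) + f"\n\nQuestion: {question}"
        for depth in depths
    }

def run_probe(prompts: dict[float, str], ask_model: Callable[[str], str],
              expected: str) -> dict[float, bool]:
    """Record, per depth, whether the model's answer contains the expected fact."""
    return {depth: expected in ask_model(prompt) for depth, prompt in prompts.items()}

# Filler stands in for a long corpus; the needle is a single fact to recover.
filler = [f"Note {i}: routine meeting summary with no decisions." for i in range(100)]
needle = "Note 999: the staging database password rotates every 17 days."
prompts = build_probe_prompts(filler, needle, "How often does the staging password rotate?")

# ask_model is hypothetical; with a real client you would run something like:
#   results = run_probe(prompts, ask_model, expected="17")
```

A handful of trials per depth is indicative rather than statistically robust, so repeat the probe at the corpus sizes you actually expect before drawing conclusions.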

Reading the aggregate signal

No single dimension gives you the answer in isolation. The aggregate signal across all five does. In my experience, when four or five dimensions point in the same direction, the architectural choice is clear and the decision should be made with confidence. When the dimensions split more evenly, for example a bounded but frequently updated corpus with hard latency constraints, you are looking at a hybrid boundary: partial context loading for stable reference material combined with selective retrieval for dynamic content, or tiered approaches that serve different query types through different mechanisms.
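The first hybrid shape described above, stable material always in context plus selective retrieval for dynamic content, can be sketched as a tiered context builder. Everything here is a hypothetical illustration: the `Doc` type, the `stable` flag, `hybrid_context`, and the term-overlap ranking that stands in for embedding similarity.

```python
import re
from dataclasses import dataclass

@dataclass
class Doc:
    doc_id: str
    text: str
    stable: bool  # True for rarely changing reference material

def terms(text: str) -> set[str]:
    """Lowercased alphanumeric terms; a crude stand-in for a real tokenizer."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def hybrid_context(query: str, docs: list[Doc], k: int = 2) -> list[str]:
    """Always include stable docs; add up to k dynamic docs that match the query."""
    stable = [d for d in docs if d.stable]
    dynamic = [d for d in docs if not d.stable]
    query_terms = terms(query)
    ranked = sorted(dynamic, key=lambda d: len(query_terms & terms(d.text)), reverse=True)
    picked = [d for d in ranked[:k] if query_terms & terms(d.text)]  # drop zero-overlap docs
    return [d.doc_id for d in stable + picked]

docs = [
    Doc("glossary", "Definitions of core terms used across the platform.", stable=True),
    Doc("architecture", "High level architecture of the ingestion pipeline.", stable=True),
    Doc("changelog-webhooks", "Changelog: webhook retries now use exponential back off.", stable=False),
    Doc("changelog-limits", "Changelog: rate limits raised to 200 requests per minute.", stable=False),
    Doc("incident-webhooks", "Incident report: delayed webhook deliveries in one region.", stable=False),
]

print(hybrid_context("Why were webhook deliveries delayed?", docs))
# ['glossary', 'architecture', 'incident-webhooks', 'changelog-webhooks']
```

The stable tier could also be served from a provider's prompt cache where one is available, since it is identical across calls; whether that helps depends on your provider.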

The two systems I described map cleanly through this test. The personal documentation assistant pointed to context loading on all five dimensions: bounded corpus, static data, no hard latency constraint, negligible token cost at that size, and tolerance for some imprecision. I built RAG infrastructure anyway, because I had not asked the questions. The technical documentation corpus pointed to RAG on all five: unbounded and growing, continuously updated, a hard sub-second constraint for agent workflows, prohibitive token cost at scale, and zero tolerance for retrieval misses. The infrastructure cost was justified because the problem required it. Same diagnostic. Opposite answers.
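The four-of-five aggregation rule can be written down so that every dimension must be scored explicitly before a verdict comes out. This is a minimal sketch, not a formal scoring standard: `Signal`, `DIMENSIONS`, and `aggregate` are hypothetical names, and the mixed example mirrors the bounded-but-frequently-updated case with hard latency constraints.

```python
from enum import Enum

class Signal(Enum):
    CONTEXT = "full-context loading"
    RAG = "retrieval (RAG)"

DIMENSIONS = ("data scope", "update frequency", "latency requirement",
              "cost constraint", "accuracy profile")

def aggregate(signals: dict[str, Signal]) -> str:
    """Apply the four-of-five rule; anything closer is a hybrid boundary."""
    missing = [d for d in DIMENSIONS if d not in signals]
    if missing:
        raise ValueError(f"unscored dimensions: {missing}")  # no skipping the diagnostic
    rag_votes = sum(1 for d in DIMENSIONS if signals[d] is Signal.RAG)
    context_votes = len(DIMENSIONS) - rag_votes
    if rag_votes >= 4:
        return Signal.RAG.value
    if context_votes >= 4:
        return Signal.CONTEXT.value
    return "hybrid boundary: examine the conflicting dimensions"

personal_notes = dict.fromkeys(DIMENSIONS, Signal.CONTEXT)
docs_corpus = dict.fromkeys(DIMENSIONS, Signal.RAG)
mixed = {"data scope": Signal.CONTEXT, "update frequency": Signal.RAG,
         "latency requirement": Signal.RAG, "cost constraint": Signal.CONTEXT,
         "accuracy profile": Signal.CONTEXT}

print(aggregate(personal_notes))  # full-context loading
print(aggregate(docs_corpus))     # retrieval (RAG)
print(aggregate(mixed))           # hybrid boundary: examine the conflicting dimensions
```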

Applying the test before you build

The Context Fit Test is most valuable when applied before a single line of retrieval code is written. In practice, that means treating it as a pre-design checkpoint rather than a post-hoc justification. The fifteen minutes spent scoring your corpus across five dimensions will either confirm the pattern you were already planning or surface a mismatch before it becomes two weeks of infrastructure you do not need.

The practical steps are straightforward. First, measure the corpus. Count the tokens in the full dataset with the tokenizer your model uses: not an estimate, an actual measurement. Many practitioners skip this step because it feels pedantic, but it is the single most clarifying action in the entire diagnostic. A corpus that fits in context with room to spare settles the data scope question immediately, and at low query volumes it largely settles the cost question too. Second, document the update cadence. Is the corpus static, updated on a defined schedule, or continuously changing? This determines whether incremental indexing is a requirement or an irrelevance. Third, state the latency requirement explicitly. "Fast enough" is not a requirement. "Under one second for agent tool calls" is a requirement. The gap between interactive and automated reasoning latency tolerance is large enough to drive the decision on its own in many cases.
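The measurement step can be as small as the sketch below. It assumes the corpus lives in local text files and that you pass in the token counter matching your model; `measure_corpus` and `fit_report` are hypothetical helpers, the window and reserve sizes are placeholders, and the whitespace counter exists only so the sketch runs without dependencies. It is not a real tokenizer.

```python
import tempfile
from pathlib import Path
from typing import Callable, Iterable

def measure_corpus(paths: Iterable[Path], count_tokens: Callable[[str], int]) -> int:
    """Sum token counts across every file in the corpus."""
    return sum(count_tokens(p.read_text(encoding="utf-8")) for p in paths)

def fit_report(corpus_tokens: int, context_window: int, reserve: int) -> str:
    """Compare corpus size to the window minus tokens reserved for prompt and output."""
    budget = context_window - reserve
    if budget <= 0:
        raise ValueError("reserve must be smaller than the context window")
    verdict = "fits in" if corpus_tokens <= budget else "does not fit in"
    return (f"corpus of {corpus_tokens:,} tokens {verdict} a {budget:,}-token budget "
            f"({corpus_tokens / budget:.0%} of budget)")

# For a real measurement, use your model's tokenizer. With tiktoken, for example:
#   enc = tiktoken.get_encoding("cl100k_base"); count = lambda t: len(enc.encode(t))
def whitespace_count(text: str) -> int:
    """Stand-in counter for this demo only; real token counts will differ."""
    return len(text.split())

with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    (root / "a.md").write_text("alpha beta gamma", encoding="utf-8")
    (root / "b.md").write_text("delta epsilon", encoding="utf-8")
    total = measure_corpus(sorted(root.glob("*.md")), whitespace_count)

print(total)                                   # 5
print(fit_report(150_000, 200_000, 20_000))    # hypothetical 200k window, 20k reserved
```

Reserving room for instructions, the question, and the answer matters: a corpus that nominally fits the window can still crowd out the output.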

The most common pitfall I observe is treating the diagnostic as optional when the answer feels obvious. If you have been following the AI architecture space closely, RAG feels like the serious pattern and full-context loading feels like the naive one. That instinct is wrong in a significant fraction of cases, and it is expensive when it leads you astray. The diagnostic is not there to confirm what you already believe — it is there to catch the cases where your instinct is pointing in the wrong direction.

A second pitfall is treating the five dimensions as equally weighted in all situations. They are not. For agent-based systems, latency requirement is frequently the dominant dimension and will override a marginal signal from cost or accuracy profile. For batch processing systems where queries run overnight, latency may be irrelevant and cost becomes dominant. Know which dimensions carry the most weight for your specific system before you read the aggregate signal.
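To illustrate how weighting changes the reading, here is a minimal sketch in which each dimension's signal is +1 (favours RAG), -1 (favours full context), or 0 (neutral), and a per-system weight profile scales it. The article does not prescribe numeric weights: `PROFILES`, its values, the example signals, and `weighted_lean` are all hypothetical.

```python
DIMENSIONS = ("data scope", "update frequency", "latency requirement",
              "cost constraint", "accuracy profile")

# Hypothetical weight profiles; tune them to the system you are designing.
PROFILES = {
    "agent": {"data scope": 1.0, "update frequency": 1.0, "latency requirement": 3.0,
              "cost constraint": 1.0, "accuracy profile": 2.0},
    "batch": {"data scope": 1.0, "update frequency": 1.0, "latency requirement": 0.0,
              "cost constraint": 3.0, "accuracy profile": 1.0},
}

def weighted_lean(signals: dict[str, int], weights: dict[str, float]) -> float:
    """Weighted mean of signals in [-1, 1]: positive leans RAG, negative leans full context."""
    total_weight = sum(weights[d] for d in DIMENSIONS)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(weights[d] * signals[d] for d in DIMENSIONS) / total_weight

# Hypothetical system: bounded, static, cheap to load, but needs sub-second
# answers and has low tolerance for misses.
signals = {"data scope": -1, "update frequency": -1, "latency requirement": 1,
           "cost constraint": -1, "accuracy profile": 1}

for name, weights in PROFILES.items():
    print(name, round(weighted_lean(signals, weights), 2))
```

Unweighted, this system splits three to two toward full context. Under the agent profile it leans toward RAG (0.25); under the batch profile, where latency carries no weight, it leans firmly toward full context (about -0.67).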

The success metric for applying this test is simple: you can state, in one sentence per dimension, where your system scores and why. If you cannot do that, you have not measured the problem — you have assumed it.

Measure the problem before you build the solution

The architectural judgment required to choose between retrieval-augmented generation and full-context loading is not primarily about knowing which pattern is better. It is about knowing which conditions each pattern was designed to address, and then measuring your problem against those conditions before you commit to an implementation.

Before I ran that calculation on my 200-page corpus, I was measuring success by how sophisticated the architecture was. After I replaced the pipeline with a single context-loaded call, I started measuring success by whether the architecture matched the problem. Those are genuinely different standards, and the shift between them is, in my experience, one of the more important recalibrations an architect can make.

The five-dimension Context Fit Test — data scope, update frequency, latency requirement, cost constraint, and accuracy profile — is the structured form of that recalibration. Apply it to your next knowledge retrieval design decision. Write down where each dimension points. If four or five align, you have your answer. If they conflict, you have found your hybrid boundary, and that is useful information too.

Your Mileage May Vary — your corpus size, your latency constraints, your cost envelope, and your tolerance for retrieval imprecision are specific to your system. But the five questions are worth asking regardless of where the answers land.

If you are currently running a knowledge retrieval system that you designed without this diagnostic, run it now against what you have already built. You may find the architecture matches the problem precisely. You may find a mismatch worth addressing. Either outcome is better than not knowing.