Introduction

I spent two weeks building retrieval infrastructure for a problem that did not require infrastructure. The corpus was 200 pages of personal reference material — roughly 150,000 tokens — and the entire thing fit inside a single context window. I did not need a retrieval pipeline. I needed a well-constructed prompt. I replaced the pipeline with a single context-loaded call, and query latency dropped from 30 seconds to under 3. Infrastructure cost dropped to zero.

That experience forced me to confront a question I had been avoiding: how do you know, before you build, which knowledge retrieval pattern your problem actually requires? The debate between retrieval augmented generation (RAG) and long context loading is generating strong opinions right now, and most of those opinions are framed as tribal positions rather than structured trade-offs.

This article expands on my video about RAG vs long context windows, where I walk through both that over-engineered failure and a system where the same retrieval infrastructure I tore down was exactly the right answer. Here, I want to give you the five-dimension diagnostic I now run before every knowledge retrieval design decision — and show you how it maps to both stories. This is aimed at developers and architects who are building or evaluating AI-assisted systems and want a structured way to make the pattern choice before they commit to an implementation.

Why knowledge retrieval pattern selection matters now

Context windows have grown significantly over the past two years. What required retrieval infrastructure in earlier model generations can now, in many cases, fit directly into a single inference call. That is a genuine shift, and it has prompted a wave of commentary suggesting that retrieval augmented generation is becoming obsolete. The argument has surface appeal: if you can load everything into context, why build the indexing and retrieval machinery?

The problem with that framing is that it assumes a bounded, stable corpus. For a significant class of problems, that assumption holds — and for those problems, long context loading is now the correct answer. I proved that to myself with two weeks of engineering work I did not need. But for another class of problems, the assumption does not hold at all, and the "load it in context" argument breaks down quickly when you examine the actual constraints.

An unbounded corpus does not become bounded because context windows grew. A corpus that updates weekly does not become static because inference got cheaper. Sub-second retrieval requirements for automated reasoning workflows do not relax because large language models can theoretically hold more in context. What changed is the threshold — the boundary between "load it in context" and "build retrieval infrastructure" moved. It did not disappear.

The practical consequence is that architects and developers are making pattern choices in an environment where the debate has become polarised. You are either a retrieval augmented generation advocate or a long context advocate, and the other camp is presented as wrong. That is not architectural thinking — that is trend following. Architectural thinking starts with measuring the problem. The five-dimension diagnostic I describe in this article is the structured way I force myself to do that measurement before I choose a pattern.

There is also a subtler risk worth naming. Retrieval augmented generation carries a signal of sophistication. It involves chunking strategies, embedding models, vector indices, retrieval tuning — components that feel like serious engineering. That signal can make the pattern feel like the correct default for anyone building serious AI systems. In my experience, that instinct is one of the more reliable sources of over-engineering in AI system design. Complexity is not a proxy for correctness. Match is.

The Context Fit Test: five dimensions for retrieval pattern selection

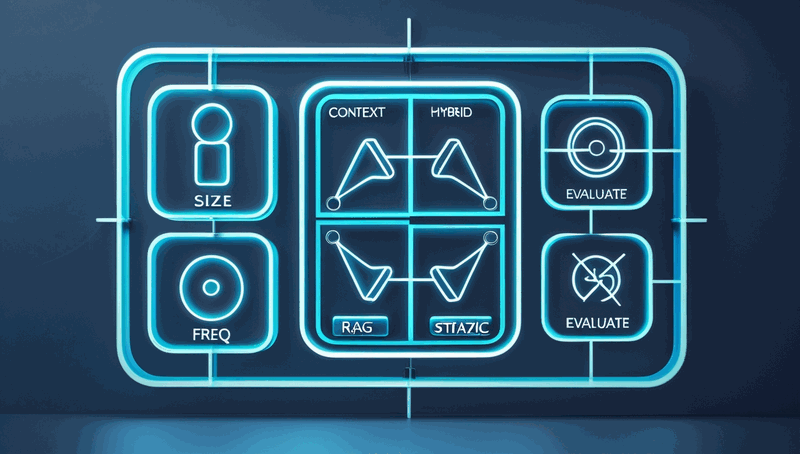

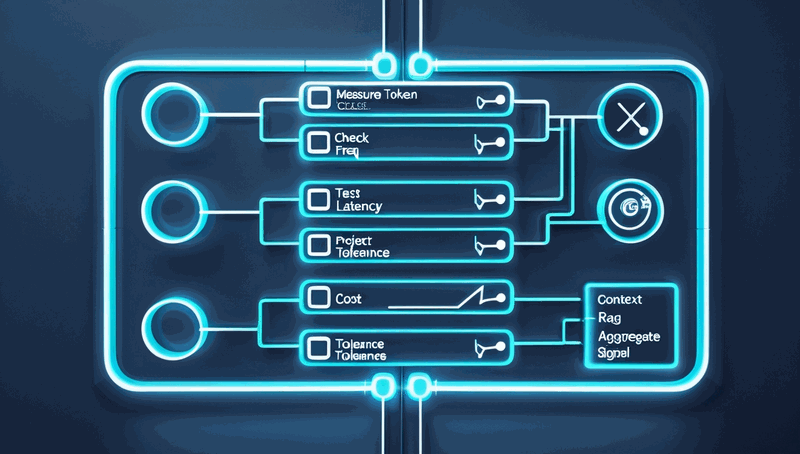

Every knowledge retrieval design decision I have made since those two experiences runs through the same five questions. I call this the Context Fit Test. It is not a formal standard — it is a structured diagnostic that forces you to look at the dimensions that actually determine the answer, rather than defaulting to whichever pattern you most recently read about. The five dimensions are: data scope, update frequency, latency requirement, cost constraint, and accuracy profile.

Data scope

Data scope is the first and most clarifying question: is the corpus bounded and stable, or unbounded and growing? Bounded means you can enumerate the corpus today and expect it to look roughly the same next month. Unbounded means the corpus is a living thing — documents added, updated, and restructured on a continuous basis.

The personal documentation assistant I over-engineered was bounded. Two hundred pages of reference material that I added to occasionally but that was not growing in any systematic way. The technical documentation corpus I built later was unbounded — several hundred documents at the time, with new standards, decision records, and reference patterns being added weekly, and no ceiling in sight. That single dimension already points strongly toward the answer in both cases. Bounded corpora are candidates for context loading. Unbounded corpora are candidates for retrieval infrastructure.

The reason this dimension is so clarifying is that it determines whether the architectural choice is durable. A bounded corpus that fits in context today will still fit in context next month. An unbounded corpus that fits in context today may not fit next quarter — and even if it does, loading the full corpus on every query means your per-query token cost scales with corpus growth, indefinitely.

Update frequency

Update frequency sharpens the picture that data scope establishes. Static data, meaning data that does not change, can be assembled into a prompt once and reused as-is. Dynamic data makes full-context loading either more expensive or more stale: if your corpus is updated weekly, you either rebuild and re-send the full corpus after every change, or you keep serving a snapshot that no longer matches the source. A retrieval index can be updated incrementally, re-indexing only the documents that changed; a context-loaded prompt has to be rebuilt as a whole.

There is a practical nuance here worth noting. Some corpora are nominally dynamic but practically static — a document set that is updated once per quarter, for example, may still be a candidate for context loading if the corpus size permits it. The question is not whether updates happen, but whether the frequency and volume of updates make incremental indexing materially more efficient than periodic full reloads. In my experience, weekly or more frequent updates tip the balance toward retrieval infrastructure.

Latency requirement

Latency requirement is where many system designers underestimate the difference between the two patterns. Full-context loading has a cost: you are passing potentially hundreds of thousands of tokens into every inference call, and the model must process that context before generating a response. For interactive use cases — a human querying a documentation assistant — that latency may be entirely acceptable. For automated reasoning workflows where an AI agent is querying mid-task, expecting a relevant document back in under one second, full-context loading cannot compete.

The technical documentation corpus I described in the video was serving exactly that use case: AI agents querying on demand, mid-task, with a hard sub-second retrieval constraint. A missed retrieval or an exceeded latency budget did not just produce a worse answer; it broke the reasoning chain entirely. Once the index is built, the retrieval step typically returns in milliseconds, because it searches an index instead of processing the whole corpus. That gap is not closeable by optimising the inference call; it is structural.

If your latency requirement is interactive and human-paced, this dimension may not differentiate the two patterns significantly. If your latency requirement is machine-paced and hard-constrained, it almost certainly does.

Cost constraint

Cost constraint is the economic dimension of the decision, and it operates differently depending on query volume. Token cost for full-context loading scales linearly with corpus size: every query pays the full corpus cost in tokens. If your corpus is 150,000 tokens and you run ten queries per day, that cost is negligible. If your corpus is 10 million tokens and you run thousands of queries per day, the token cost becomes the dominant operational expense.

Retrieval augmented generation infrastructure has a different cost structure: a fixed cost to build and maintain the index, and a per-query cost that reflects only the retrieved documents — typically a small fraction of the full corpus. At sufficient query volume and corpus size, retrieval augmented generation is cheaper to operate than full-context loading. Below a threshold, the infrastructure cost exceeds the token savings. Knowing which side of that threshold your system sits on is essential before you commit to either pattern.

I want to be direct about something here: the cost threshold is not fixed. It shifts as model pricing changes, as context window sizes grow, and as retrieval infrastructure tooling matures. The calculation I ran two years ago may give a different answer today. Run the numbers for your current cost environment, not for the environment that existed when you last evaluated this decision.
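To make "run the numbers" concrete, here is a minimal sketch of the break-even arithmetic. It assumes a single flat input-token price and a fixed monthly infrastructure cost, and it ignores output tokens, prompt caching discounts, and engineering time. Every function and parameter name is hypothetical, and the prices you pass in should come from your provider's current price list, not from this example.

```python
def full_context_monthly_cost(corpus_tokens: int, queries_per_month: int,
                              price_per_million_tokens: float) -> float:
    """Every query pays for the whole corpus as input tokens."""
    return corpus_tokens / 1_000_000 * price_per_million_tokens * queries_per_month


def retrieval_monthly_cost(retrieved_tokens: int, queries_per_month: int,
                           price_per_million_tokens: float,
                           infra_monthly: float) -> float:
    """Fixed infrastructure cost plus input tokens for the retrieved documents only."""
    per_query = retrieved_tokens / 1_000_000 * price_per_million_tokens
    return infra_monthly + per_query * queries_per_month


def break_even_queries(corpus_tokens: int, retrieved_tokens: int,
                       price_per_million_tokens: float,
                       infra_monthly: float) -> float:
    """Monthly query volume above which retrieval becomes cheaper than full-context loading."""
    saving_per_query = (corpus_tokens - retrieved_tokens) / 1_000_000 * price_per_million_tokens
    if saving_per_query <= 0:
        # Retrieval never saves tokens, so there is no break-even point.
        return float("inf")
    return infra_monthly / saving_per_query
```

If your expected monthly query volume sits well below the break-even figure, the infrastructure cost exceeds the token savings; well above it, the token bill dominates.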

Accuracy profile

Accuracy profile is the final dimension, and in my experience it is the one that surprises people most. Large language models have a known degradation pattern in very long contexts: the model's ability to use information in the middle of a long context tends to be weaker than its ability to use information at the beginning or end. This is sometimes called "lost in the middle" degradation, and it has been documented in evaluations of long-context models, although the size of the effect varies from model to model.

For some use cases, that degradation is acceptable. A slightly imprecise answer from a personal documentation assistant is still useful — the user can follow up, rephrase, or tolerate some miss rate. For other use cases, like the agent workflow I described, a missed relevant document is a failure, not an approximation. The agent's reasoning chain depended on receiving the precise relevant document; receiving a near-miss or missing the document entirely broke the task.

Know your tolerance before you choose your pattern. If your use case requires high precision retrieval — where a miss is a failure rather than an approximation — retrieval augmented generation's targeted semantic search has a structural advantage over full-context loading at scale.

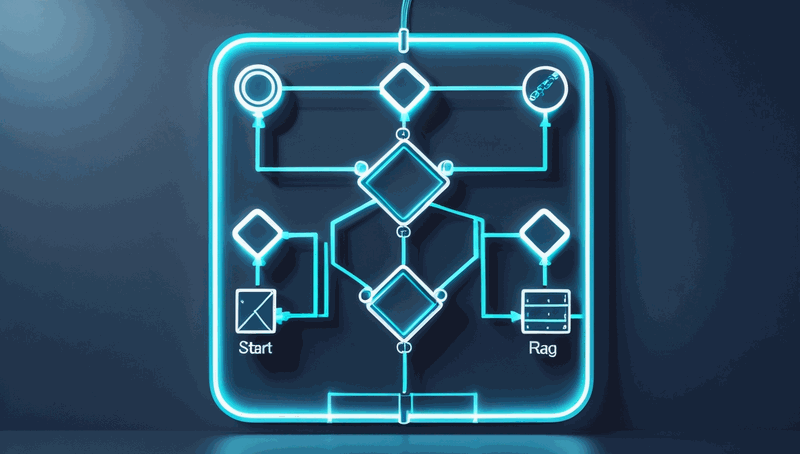

Reading the aggregate signal

No single dimension gives you the answer. The aggregate signal across all five does. In my experience, when four or five dimensions point in the same direction, the architectural choice is clear. When the dimensions split three against two, you are looking at a hybrid: partial context loading combined with selective retrieval, or a tiered approach that serves different query types differently.

The two stories from the video illustrate this cleanly. The personal documentation assistant: bounded corpus, static data, interactive latency, negligible token cost, acceptable imprecision. All five dimensions pointed toward context loading. The technical documentation corpus: unbounded and growing, continuous updates, sub-second hard constraint, prohibitive scaling token cost, zero tolerance for retrieval miss. All five dimensions pointed toward retrieval augmented generation. Same diagnostic, opposite answers.
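The aggregate reading can be written down as a small scorecard. This is a sketch, not a formal scoring model: it assumes each dimension casts one equally weighted vote, which is a simplification, and the names `Pattern`, `DIMENSIONS`, and `read_aggregate` are hypothetical.

```python
from enum import Enum
from typing import Dict


class Pattern(Enum):
    CONTEXT = "context loading"
    RETRIEVAL = "retrieval"


# The five Context Fit Test dimensions from this article.
DIMENSIONS = (
    "data_scope",
    "update_frequency",
    "latency_requirement",
    "cost_constraint",
    "accuracy_profile",
)


def read_aggregate(votes: Dict[str, Pattern]) -> str:
    """Return 'context loading', 'retrieval', or 'hybrid' from five per-dimension votes."""
    missing = [d for d in DIMENSIONS if d not in votes]
    if missing:
        raise ValueError(f"no vote recorded for: {', '.join(missing)}")
    context_votes = sum(1 for d in DIMENSIONS if votes[d] is Pattern.CONTEXT)
    retrieval_votes = len(DIMENSIONS) - context_votes
    # Four or five aligned dimensions give a clear answer; a 3-2 split suggests a hybrid.
    if context_votes >= 4:
        return Pattern.CONTEXT.value
    if retrieval_votes >= 4:
        return Pattern.RETRIEVAL.value
    return "hybrid"


# The two systems from the video, with every dimension pointing the same way.
personal_assistant = {d: Pattern.CONTEXT for d in DIMENSIONS}
technical_docs = {d: Pattern.RETRIEVAL for d in DIMENSIONS}
print(read_aggregate(personal_assistant))  # context loading
print(read_aggregate(technical_docs))      # retrieval
```

Requiring a vote for every dimension is deliberate: it stops you from skipping the one dimension you have not measured yet.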

One pattern worth watching for: when a corpus starts bounded and static but has a credible growth trajectory, the Context Fit Test answer today may not be the answer in eighteen months. Design for where the corpus is going, not just where it is now. Our post on measuring before you build in AI system design goes deeper on this trajectory thinking.

Applying the diagnostic before you build

The Context Fit Test is most valuable when you run it before you write a single line of retrieval code — not after you have already committed to a pattern and are looking for validation. The practical application is straightforward: for each of the five dimensions, write down where your system sits and which pattern that dimension favours. Then read the aggregate.

Start with data scope and update frequency together, since they are closely related. Measure your corpus size in tokens — most tokenisation tools will give you this in minutes — and compare it against the context window available to you. If it fits with room to spare, and the corpus is stable, you have a strong signal toward context loading. If it does not fit, or if it fits today but has a clear growth trajectory, you have a signal toward retrieval infrastructure. Document this measurement explicitly; it is the single most clarifying data point in the diagnostic.
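The corpus measurement can be sketched in a few lines. This version assumes a rough heuristic of about four characters per token for English prose, which is only an approximation; pass in your model's real tokeniser through `count_tokens` for a number you can base a decision on. The 25% headroom for instructions, the question, and the answer is likewise an assumed default, and all names here are hypothetical.

```python
from typing import Callable, Iterable


def approx_token_count(text: str) -> int:
    # Assumption: ~4 characters per token. Replace with a real tokeniser.
    return (len(text) + 3) // 4


def corpus_tokens(texts: Iterable[str],
                  count_tokens: Callable[[str], int] = approx_token_count) -> int:
    """Total token count across every document in the corpus."""
    return sum(count_tokens(t) for t in texts)


def fits_in_context(total_tokens: int, context_window: int,
                    reserve_fraction: float = 0.25) -> bool:
    """True if the corpus fits while leaving reserve_fraction of the window free."""
    budget = int(context_window * (1 - reserve_fraction))
    return total_tokens <= budget
```

In practice you would feed `corpus_tokens` the text of each file in the corpus and record the result, together with the date and the tokeniser used, alongside the design decision.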

For latency requirement, identify whether your system serves human-paced interactive queries or machine-paced automated workflows. If it is the latter, establish the actual latency budget in milliseconds and test whether full-context inference can meet it under realistic load. Do not assume — measure. I have seen systems where the latency assumption was wrong in both directions.
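A minimal timing harness for that measurement might look like the following. It is a sketch under stated assumptions: it times sequential calls only, so it does not reproduce concurrent load, and `call` stands in for whatever function wraps your real full-context request. The function names are hypothetical; `time.perf_counter` and `statistics.quantiles` are standard library.

```python
import statistics
import time
from typing import Callable, List


def measure_latency_ms(call: Callable[[], object], runs: int = 20) -> List[float]:
    """Time `runs` sequential invocations of `call`, returning milliseconds per call."""
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        call()
        samples.append((time.perf_counter() - start) * 1000.0)
    return samples


def p95(samples: List[float]) -> float:
    # With n=20, quantiles() returns 19 cut points; the last one is the 95th percentile.
    return statistics.quantiles(samples, n=20)[-1]


def meets_budget(samples: List[float], budget_ms: float) -> bool:
    """Compare tail latency, not the average, against the budget."""
    return p95(samples) <= budget_ms
```

Checking the 95th percentile instead of the mean is a design choice: for a machine-paced workflow with a hard constraint, the slow calls are the ones that break the chain.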

For cost constraint, calculate the token cost of full-context loading at your expected query volume and compare it against the infrastructure cost of a retrieval index. Use current pricing, not historical estimates. If the corpus is growing, project that cost forward twelve months.
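The twelve-month projection is simple arithmetic once you have a growth estimate. The sketch below assumes linear corpus growth and a constant query volume and price, all of which are simplifications; the function and parameter names are hypothetical.

```python
from typing import List, Tuple


def project_full_context_costs(corpus_tokens: int, monthly_growth_tokens: int,
                               queries_per_month: int,
                               price_per_million_tokens: float,
                               months: int = 12) -> List[Tuple[int, int, float]]:
    """Return (month, corpus size in tokens, monthly token cost) for each projected month."""
    projection = []
    for month in range(months):
        size = corpus_tokens + month * monthly_growth_tokens
        cost = size / 1_000_000 * price_per_million_tokens * queries_per_month
        projection.append((month + 1, size, cost))
    return projection
```

Reading the size column against your context window matters as much as the cost column: it shows the month in which the corpus stops fitting at all.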

Common pitfalls

The most common pitfall I see is running the diagnostic in reverse — choosing a pattern first and then finding evidence to support it. Retrieval augmented generation carries a sophistication signal that makes it easy to rationalise. If you find yourself listing reasons why your bounded, static, low-volume corpus "really" needs retrieval infrastructure, treat that as a warning sign.

The second pitfall is treating the diagnostic as a one-time decision. Corpora grow. Query volumes change. Model pricing shifts. The answer that was correct at system inception may not be correct eighteen months later. Build a review trigger into your system design — a corpus size threshold or a query volume threshold that prompts you to re-run the diagnostic. Our post on architectural decision-making frameworks covers how to structure these review triggers as part of a broader decision record practice.
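A review trigger can be as small as a pair of thresholds checked on a schedule. This is an illustrative sketch with hypothetical names and threshold values you would set yourself; how the current corpus size and query volume are collected is left out.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class ReviewTrigger:
    max_corpus_tokens: int
    max_queries_per_day: int

    def reasons_to_rerun(self, corpus_tokens: int, queries_per_day: int) -> List[str]:
        """Return the thresholds that have been crossed; an empty list means no review is due."""
        reasons = []
        if corpus_tokens > self.max_corpus_tokens:
            reasons.append(
                f"corpus size {corpus_tokens} exceeds threshold {self.max_corpus_tokens}"
            )
        if queries_per_day > self.max_queries_per_day:
            reasons.append(
                f"query volume {queries_per_day} exceeds threshold {self.max_queries_per_day}"
            )
        return reasons
```

Returning the reasons, not just a boolean, gives you the text for the decision record when you do re-run the diagnostic.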

Success looks like this: you can articulate, for each of the five dimensions, where your system sits and why. You have a documented measurement of corpus size. You have a tested latency figure under realistic load. You have a cost projection at expected query volume. If you have those four artefacts, you have made the decision with evidence rather than instinct.

Closing the argument

The debate between retrieval augmented generation and long context loading is not going to resolve into a single correct answer, because the two patterns solve different problems. What I hope this diagnostic gives you is a way to stop engaging with the debate as a tribal choice and start engaging with it as a structured trade-off analysis.

The threshold between the two patterns moved as context windows grew. Some systems that genuinely required retrieval infrastructure two years ago are now better served by context loading. Others remain firmly in retrieval augmented generation territory because the corpus is unbounded, dynamic, and precision-constrained. The diagnostic tells you which category your system belongs to — and it tells you before you spend two weeks building infrastructure you do not need.

Before your next knowledge retrieval design decision, take fifteen minutes and run the Context Fit Test across all five dimensions. Write down where each dimension points. If four or five align, you have your answer. If they conflict, you have found your hybrid boundary. The five questions apply regardless of where the answers land.

Your mileage may vary: your corpus size, your latency constraints, your cost envelope, and your accuracy tolerance are specific to your system and your context. But measuring the problem before choosing the pattern is a discipline that applies universally. Watch the video on YouTube for the personal story behind this analysis. I would be interested to hear in the comments whether the five dimensions surface a different answer than the pattern you were already planning to build.

Resources

- Companion video: RAG vs long context windows

- Related post: Architectural decision-making frameworks

- Related post: Measuring before you build in AI system design