Introduction

AI requirements expose a structural gap that traditional requirements practice was never designed to see. A system can pass every review, satisfy every acceptance criterion, and still go live producing decisions that nobody specified, constrained, or governed. That gap does not appear in a standard requirements template because traditional templates were built for systems that either do what you specified or do not. AI systems operate differently: they produce outputs across a range of values, and some of those outputs are wrong in ways that look almost right.

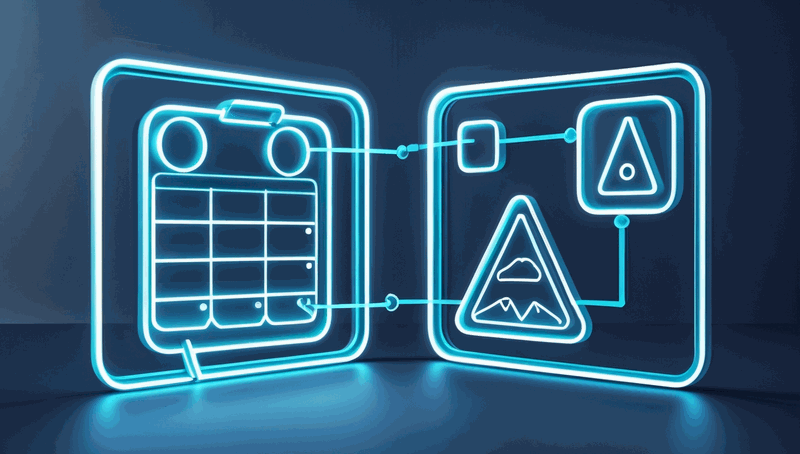

This article expands on my video about AI architecture for business analysts, where I work through two production failures in detail and derive two frameworks from them. Here, I develop those frameworks with enough depth for immediate application: the AI Requirements Triangle, which extends your existing requirements practice to capture three governance categories that traditional templates omit, and the Constraint Register, a cross-layer artifact that keeps business rules, architecture decisions, and engineering implementations aligned to the same governance outcome.

This is written for business analysts working on AI delivery projects, and for architects who review requirements specifications and want to understand where the governance surface tends to collapse.

Why traditional requirements practice misses AI governance

The completeness model that most requirements practitioners use was built for deterministic systems. A deterministic system has a binary failure mode: it either produces the specified output or it does not. That model makes functional completeness a meaningful measure of specification quality. If every capability is specified and every acceptance criterion is met, the system is complete.

AI systems break this model in a specific way. They produce outputs probabilistically, and some of those outputs are incorrect in ways that the system cannot self-identify. The output field is populated. The confidence indicator may be high. The system has technically met its functional requirement. What nobody has specified is what happens when that output is wrong, how wrong it is permitted to be, and who is responsible for catching the error. From inside a traditional completeness check, that specification looks finished. The gap is invisible because the template has no field for it.

In my experience, this is not a failure of individual practitioners. I have reviewed requirements documents that were detailed, well-structured, and genuinely thorough by every measure I knew how to apply, and still missed the same three categories every time. The gap is structural. Traditional requirements templates prompt you to specify what a system does. AI systems also require specifications for what they are allowed to get wrong, what they must know before producing output, and when they must stop and defer to a human. Those three categories are not edge cases or additions to good practice. They are the governance surface that AI systems require and that traditional templates do not surface.

There is a second failure mode that compounds the first. Even when a business analyst writes a governance requirement correctly, the constraint often loses fidelity as it moves from the requirements document into the architecture design and from the design into the engineering implementation. Each layer uses its own vocabulary. The business rule that says "the system should not recommend policy-constrained items to ineligible users" becomes a "content filtering policy" in the architecture document and an "exclusion control mechanism" in the implementation. Three phrases. One constraint. No explicit mapping between them. When an audit arrives, the team reconstructs the traceability retrospectively, at considerable cost.

Both failure modes are requirements failures, not engineering failures. The engineering team builds what is specified. What is specified is incomplete. That distinction matters because it locates the solution in the right place: the specification layer, before design begins.

The AI Requirements Triangle and the Constraint Register

The AI Requirements Triangle

The AI Requirements Triangle is a three-category extension to the requirements practice you already have. It does not replace functional requirements, non-functional requirements, or user stories. For every AI feature in your specification, it adds three companion specifications that traditional templates do not prompt you to write.

Acceptable error boundary. This is the specification of what output variance is tolerable, under what conditions, and what the system must do when it produces output outside that range. It is not a quality target in the traditional sense. A traditional quality target specifies that a system must achieve a defined accuracy level. An acceptable error boundary specifies: when the system is wrong in this specific way, this is the required fallback behaviour. It treats incorrectness as an expected system state that requires a specified response, not as a failure mode to be eliminated through engineering alone.

Consider a document processing system that uses generative AI to classify inbound business correspondence and route it to the appropriate team. A functional requirement specifies that the system must classify each document and populate an output field. An acceptable error boundary specification adds: for documents in this category, when the model's confidence score falls below a defined threshold, the document must be routed to a secondary review queue rather than processed automatically. That sentence is not an engineering constraint. It is a business decision about where the system is permitted to operate autonomously and where human review is required. Without it, the engineering team has no basis for implementing a fallback. The system routes every document automatically, because that is what the functional requirement specified.
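The acceptable error boundary in this example can be expressed as a small routing rule. The sketch below is illustrative only. The category names, threshold values, queue names and the `route_document` function are hypothetical assumptions, not part of any specific system. It shows the division of labour the specification creates: the business owns the threshold for each category, and the routing logic simply enforces it.

```python
from dataclasses import dataclass

# Hypothetical business-owned error boundaries: the minimum confidence per
# document category below which automatic processing is not permitted.
# Categories and values are illustrative assumptions only.
ERROR_BOUNDARIES = {
    "invoice": 0.90,
    "complaint": 0.95,
    "general_enquiry": 0.75,
}

AUTO_QUEUE = "automatic_processing"
REVIEW_QUEUE = "secondary_review"


@dataclass
class Classification:
    category: str
    confidence: float  # model confidence score in [0, 1]


def route_document(result: Classification) -> str:
    """Return the queue a classified document should be routed to."""
    threshold = ERROR_BOUNDARIES.get(result.category)
    if threshold is None:
        # No boundary specified for this category: do not assume autonomy.
        return REVIEW_QUEUE
    if result.confidence < threshold:
        # Output falls outside the acceptable error boundary: fallback behaviour.
        return REVIEW_QUEUE
    return AUTO_QUEUE


print(route_document(Classification("invoice", 0.97)))    # automatic_processing
print(route_document(Classification("complaint", 0.91)))  # secondary_review
print(route_document(Classification("unknown", 0.99)))    # secondary_review
```

Look at the default for an unlisted category. Sending it to review rather than to automatic processing is itself a business decision, and the error boundary specification should state it explicitly. Otherwise the engineering team ends up making that decision implicitly.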

In my experience, writing the acceptable error boundary first in any requirements session forces a stakeholder conversation that would otherwise happen after the first production incident. The question "what is this system allowed to get wrong?" is uncomfortable. It is also the most important question a business analyst can ask about an AI feature.

Context retrieval specification. This is the specification of what data must be retrievable at inference time for the system's output to be considered trustworthy. AI systems do not reason in a vacuum. They reason from context, and if that context is incomplete, outdated, or unavailable, the output may be technically produced but practically unreliable. Traditional requirements capture what a system outputs. Context retrieval specifications capture what the system must have access to before it is permitted to produce output.

When I built a retrieval pipeline for an architecture document repository, I discovered this gap through failure rather than foresight. Certain query types returned outputs that were confidently wrong because the relevant documents had not been ingested into the corpus yet. The system did not know what it did not know. A context retrieval specification would have required me to define the minimum corpus completeness before the system was permitted to answer questions in each domain. Instead, I learned the requirement by observing the failure. The specification gap was mine, not the engineering team's.

For a business analyst, the context retrieval specification surfaces integration requirements that would otherwise appear as surprises during testing. If the AI feature requires access to a user profile, a product catalogue, and a regulatory constraint list at inference time, those three data dependencies need to be specified before design begins, not discovered when the system produces a confidently wrong recommendation because one of them was unavailable.
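One way to make a context retrieval specification testable is to express each required data source as a named precondition and check it before inference is permitted. The sketch below uses the three dependencies from the example above: a user profile, a product catalogue, and a regulatory constraint list. The `ContextSpec` structure, the `check_context` function, the source keys and the fallback action name are hypothetical illustrations.

```python
from dataclasses import dataclass, field


@dataclass
class ContextSpec:
    """Hypothetical context retrieval specification for one AI feature."""
    feature: str
    required_sources: list[str]
    # What the system must do if any required source is unavailable (assumed name).
    on_missing: str = "decline_and_escalate"


@dataclass
class ContextCheck:
    permitted: bool
    missing: list[str] = field(default_factory=list)
    action: str = "proceed"


def check_context(spec: ContextSpec, retrieved: dict[str, object]) -> ContextCheck:
    """Permit inference only if every required source was retrieved and is non-empty."""
    missing = [s for s in spec.required_sources if not retrieved.get(s)]
    if missing:
        return ContextCheck(permitted=False, missing=missing, action=spec.on_missing)
    return ContextCheck(permitted=True)


spec = ContextSpec(
    feature="product_recommendation",
    required_sources=["user_profile", "product_catalogue", "regulatory_constraints"],
)

retrieved = {
    "user_profile": {"id": "u-123", "segment": "retail"},
    "product_catalogue": ["p-1", "p-2"],
    "regulatory_constraints": None,  # source unavailable at inference time
}

result = check_context(spec, retrieved)
print(result.permitted, result.missing, result.action)
# False ['regulatory_constraints'] decline_and_escalate
```

This sketch treats an empty result the same way as an unavailable source. That is a judgement call. A real specification should say which one it means, because an empty constraint list might be a valid answer.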

Human escalation trigger. This is the specification of the conditions under which the system must stop producing output autonomously and defer to a human. It is the autonomy boundary: the explicit limit of the system's permitted decision-making authority. In my experience, this is the requirement that gets written last, if it gets written at all, because it requires the business to make an explicit decision about where they trust the system and where they do not. That conversation is uncomfortable. It is also the most significant risk management requirement in any AI specification, because without it, the system has no defined limit to its autonomous operation.
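An escalation trigger can be written as an explicit list of named conditions, each of which hands the decision to a human. The sketch below is a hypothetical illustration. The condition names, the `Decision` fields, the confidence floor and the monetary limit are assumptions chosen for the example, not recommended values. It shows how the autonomy boundary becomes a list that stakeholders can review, instead of logic buried in the implementation.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Decision:
    """Hypothetical AI output awaiting release."""
    confidence: float
    amount: float  # monetary value affected by the decision
    affects_vulnerable_customer: bool
    context_complete: bool


# Each trigger pairs a business-readable name with the condition that fires it.
# Names and limits are illustrative assumptions only.
ESCALATION_TRIGGERS: list[tuple[str, Callable[[Decision], bool]]] = [
    ("low_confidence", lambda d: d.confidence < 0.80),
    ("high_value", lambda d: d.amount > 10_000),
    ("vulnerable_customer", lambda d: d.affects_vulnerable_customer),
    ("incomplete_context", lambda d: not d.context_complete),
]


def escalation_reasons(decision: Decision) -> list[str]:
    """Return every trigger that fires; an empty list means autonomy is permitted."""
    return [name for name, fires in ESCALATION_TRIGGERS if fires(decision)]


d = Decision(confidence=0.92, amount=25_000, affects_vulnerable_customer=False, context_complete=True)
reasons = escalation_reasons(d)
print("escalate to human" if reasons else "proceed autonomously", reasons)
# escalate to human ['high_value']
```

The function returns every trigger that fires rather than stopping at the first one. This means the human reviewer sees all the reasons the system deferred.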

Apply the triangle by adding three companion specifications for every AI feature before it enters design. Write the acceptable error boundary first: it forces the stakeholder conversation about what wrong looks like. Write the context retrieval specification second: it surfaces integration dependencies early. Write the human escalation trigger last: it closes the governance loop by naming the conditions under which human judgement replaces automated output. The three specifications together define the governance surface that the AI feature requires. Without them, the functional requirements are complete in the traditional sense and incomplete in the sense that matters for AI delivery.
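Some teams keep requirements in a structured backlog. For them, the triangle can be captured as three mandatory fields on each AI feature, with a simple check that flags which companion specifications are still missing. The `AIFeatureSpec` structure, the `missing_companions` function and the example wording below are a hypothetical sketch, not a prescribed schema.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AIFeatureSpec:
    """Hypothetical record pairing a functional requirement with its three companions."""
    feature: str
    functional_requirement: str
    acceptable_error_boundary: Optional[str] = None
    context_retrieval: Optional[str] = None
    human_escalation_trigger: Optional[str] = None


# Listed in the recommended writing order.
COMPANIONS = ("acceptable_error_boundary", "context_retrieval", "human_escalation_trigger")


def missing_companions(spec: AIFeatureSpec) -> list[str]:
    """Return the companion specifications that are absent or blank, in writing order."""
    return [name for name in COMPANIONS if not (getattr(spec, name) or "").strip()]


spec = AIFeatureSpec(
    feature="correspondence_routing",
    functional_requirement="Classify each inbound document and populate the category field.",
    acceptable_error_boundary=(
        "For complaints, if confidence is below the agreed threshold, "
        "route to the secondary review queue instead of processing automatically."
    ),
)

print(missing_companions(spec))
# ['context_retrieval', 'human_escalation_trigger']
```

A feature with a non-empty result is, in the sense that matters for AI delivery, not yet ready for design.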

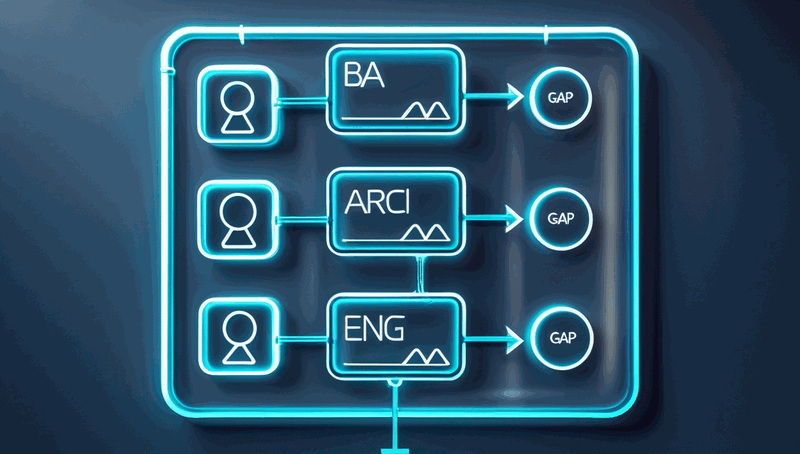

The Constraint Register

The Constraint Register resolves the second failure mode: the vocabulary mismatch that causes governance requirements to lose fidelity as they move between layers. It is a single document that traces every governance requirement from the business rule the BA stated, through the design decision the architect made, to the implementation mechanism the engineer built, with a named owner at each layer.

The structure is deliberately simple. Four columns: the governance requirement as the BA stated it, the design decision the architect made to address it, the implementation mechanism the engineer built, and a named owner at each layer who confirms the translation is complete. One row per constraint. One document shared across all three layers.
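If the register lives in a spreadsheet or wiki table, the four columns are all it needs. Teams that prefer to keep it alongside code could use something like the sketch below. It represents each row and renders the register as a table, so the BA's wording and the architect's and engineer's translations sit side by side. The field names and owner names are hypothetical. The example row reuses the policy-constrained recommendation rule quoted earlier.

```python
from dataclasses import dataclass, field


@dataclass
class ConstraintRow:
    """One governance constraint traced across three layers (hypothetical schema)."""
    requirement: str           # as the BA stated it
    design_decision: str = ""  # as the architect addressed it
    implementation: str = ""   # as the engineer built it
    owners: dict[str, str] = field(default_factory=dict)  # layer -> named owner


def render(register: list[ConstraintRow]) -> str:
    """Render the register as a pipe-delimited table, one row per constraint."""
    lines = [
        "| Requirement (BA) | Design decision (architect) | Implementation (engineer) | Owners |",
        "|---|---|---|---|",
    ]
    for row in register:
        owners = ", ".join(f"{layer}: {name}" for layer, name in row.owners.items())
        lines.append(
            f"| {row.requirement} | {row.design_decision or '(open)'} "
            f"| {row.implementation or '(open)'} | {owners or '(unassigned)'} |"
        )
    return "\n".join(lines)


register = [
    ConstraintRow(
        requirement="Do not recommend policy-constrained items to ineligible users",
        design_decision="Content filtering policy at the AI application delivery tier",
        implementation="Exclusion control mechanism",
        owners={"BA": "A. Analyst", "Architect": "B. Architect", "Engineer": "C. Engineer"},
    )
]

print(render(register))
```

Empty columns render as "(open)" rather than as blanks. A gap in the translation should be visible on the row, not hidden by whitespace.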

The register does three things that no individual deliverable achieves on its own.

It makes vocabulary mismatches visible before they become audit findings. When the BA's "should not recommend policy-constrained items" and the architect's "content filtering policy at the AI application delivery tier" appear on the same row, a reviewer can immediately assess whether the design decision addresses the business rule or whether there is a gap in the translation. The mismatch is visible in the document, not hidden in the space between documents.

It creates shared vocabulary across the delivery team. Once the register exists, all three layers have a single reference point for what each governance requirement means at each implementation stage. The BA, the architect, and the engineer are no longer working from their own vocabulary in their own documents. They are working from a shared constraint definition that all three layers have agreed on. In my experience, this single change reduces cross-functional misunderstanding in AI delivery more than any other process intervention I have applied.

It makes compliance preparation a documentation task rather than an investigation. When an auditor asks how a specific business rule was implemented, the answer is one row in the register, with three named owners and three linked artifacts. The reconstruction work that took weeks on the advisory system project I described in the video takes hours when the traceability is built into the workflow from the start.

Build the register at the start of the project. Add a row for every governance requirement as it is identified, whether that happens in a requirements workshop, an architecture review, or an engineering design session. Assign named owners at each layer before the deliverable is built. The register is not a retrospective document. It is a live artifact that grows with the project and provides the audit trail as a byproduct of normal delivery practice, not as a separate governance effort.

How the two frameworks relate

The AI Requirements Triangle and the Constraint Register address two different failure modes. The triangle prevents governance gaps from forming inside the requirements specification by adding the three categories traditional templates omit. The register prevents governance gaps from forming in the translation between layers by making vocabulary mismatches visible and traceability explicit. Together, they cover the two most consistent failure patterns in enterprise AI delivery. A project that applies both is specifying what the system is allowed to get wrong, what it must know before answering, and when it must stop, and it is ensuring those specifications survive intact through architecture design and engineering implementation.

Applying both frameworks in practice

The most effective entry point is a single AI feature already in your backlog. Before the feature enters design, open a blank document and write three companion specifications using the AI Requirements Triangle. Write the acceptable error boundary first: state what output variance is tolerable, under what conditions, and what fallback behaviour is required when the system exceeds that variance. Write the context retrieval specification second: list every data source the system must have access to at inference time for its output to be trustworthy, and specify what happens if any of those sources are unavailable. Write the human escalation trigger last: state the explicit conditions under which the system must stop producing output autonomously and route to a human.

When you have written all three, compare them against your existing functional requirements for that feature. In my experience, the first time a business analyst applies this to an AI feature, at least one of the three companion specifications reveals a decision that had been implicitly delegated to the engineering team without being stated as a business decision. That implicit delegation is the gap. Making it explicit is the work.

For the Constraint Register, start with a shared document that all three layers can access and edit. Add one row for each governance requirement identified in the requirements workshop. At the architecture review, the architect adds the design decision column for each row. At the engineering design session, the engineer adds the implementation mechanism column and confirms the named owner. The register does not require a dedicated governance process. It requires only that three conversations that would happen anyway (the requirements workshop, the architecture review, and the engineering design session) produce a shared artifact rather than three separate documents.
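The three-session workflow can be sketched as each layer filling in its own column on a shared row, with a report of which rows still have an open column or an unassigned owner. This is a hypothetical illustration. The `ConstraintRow` fields, the layer names, the `GOV-01` identifier and the owner names are all assumptions.

```python
from dataclasses import dataclass, field

LAYERS = ("BA", "Architect", "Engineer")


@dataclass
class ConstraintRow:
    """A shared register row that each layer completes in its own session (hypothetical)."""
    constraint_id: str
    requirement: str
    design_decision: str = ""
    implementation: str = ""
    owners: dict[str, str] = field(default_factory=dict)


def open_items(register: list[ConstraintRow]) -> dict[str, list[str]]:
    """Map each constraint ID to the columns or owners that are still missing."""
    report: dict[str, list[str]] = {}
    for row in register:
        gaps = []
        if not row.design_decision:
            gaps.append("design_decision")
        if not row.implementation:
            gaps.append("implementation")
        gaps += [f"owner:{layer}" for layer in LAYERS if layer not in row.owners]
        if gaps:
            report[row.constraint_id] = gaps
    return report


# Requirements workshop: the BA adds the row and is recorded as its owner.
row = ConstraintRow("GOV-01", "Do not recommend policy-constrained items to ineligible users")
row.owners["BA"] = "A. Analyst"
register = [row]
print(open_items(register))
# {'GOV-01': ['design_decision', 'implementation', 'owner:Architect', 'owner:Engineer']}

# Architecture review: the architect adds the design decision.
row.design_decision = "Content filtering policy at the AI application delivery tier"
row.owners["Architect"] = "B. Architect"

# Engineering design session: the engineer adds the mechanism and confirms ownership.
row.implementation = "Exclusion control mechanism"
row.owners["Engineer"] = "C. Engineer"
print(open_items(register))
# {}
```

Running a report like this at the end of each session surfaces an unfinished translation while it is still cheap to fix. That is the forward-built register described here, as opposed to a retrospective one.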

Two pitfalls are worth naming. The first is treating the acceptable error boundary as a quality target rather than a fallback specification. A quality target says the system must achieve a defined accuracy level. A fallback specification says what the system does when it does not. Both are needed. They are not the same thing. The second pitfall is building the Constraint Register retrospectively. A register built after the fact requires the reconstruction work it was designed to prevent. Its value is entirely in being built forward, as governance requirements are identified, not backward, as audit findings are investigated.

Success looks like this: the first audit question about a governance requirement is answered by pointing to a row in the register, not by scheduling a retrospective investigation. That is the measure worth tracking.

Closing the governance gap before production opens it

Responsible AI delivery is teaching the industry that governance is a requirements discipline, not an audit discipline. That is a significant shift in where accountability sits. Business analysts are the practitioners best positioned to lead it, because governance requirements live in the specification layer. If they are not written there, they are not written anywhere that matters before the system goes live.

The AI Requirements Triangle and the Constraint Register are not complex frameworks. Their value is in making visible what traditional practice leaves implicit: the three governance categories that AI systems require and that standard templates do not surface, and the cross-layer traceability that prevents those categories from losing fidelity between the BA's sentence, the architect's design, and the engineer's implementation.

In my experience, the gap is consistent across enterprise AI delivery contexts. The specific domain, the team structure, and the governance culture all differ. The underlying failure mode does not. Systems go live with unspecified error boundaries, undocumented context dependencies, and absent escalation triggers, because the requirements template had no field for them and nobody asked the three questions that would have surfaced them.

Take fifteen minutes before your next requirements session on an AI feature and write the three companion specifications for one feature already in your backlog. Your mileage may vary on how many gaps you find, but in my experience, the exercise always surfaces at least one requirement that was never going to be written any other way. If you have applied either framework on a project, I would be interested to hear what it surfaced. Share your experience in the comments.